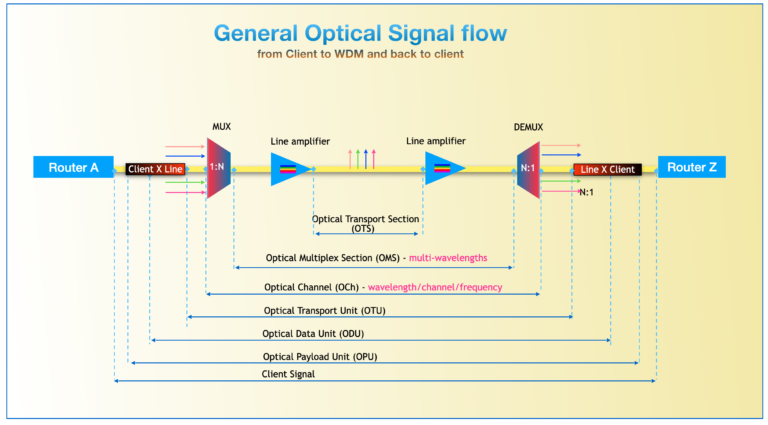

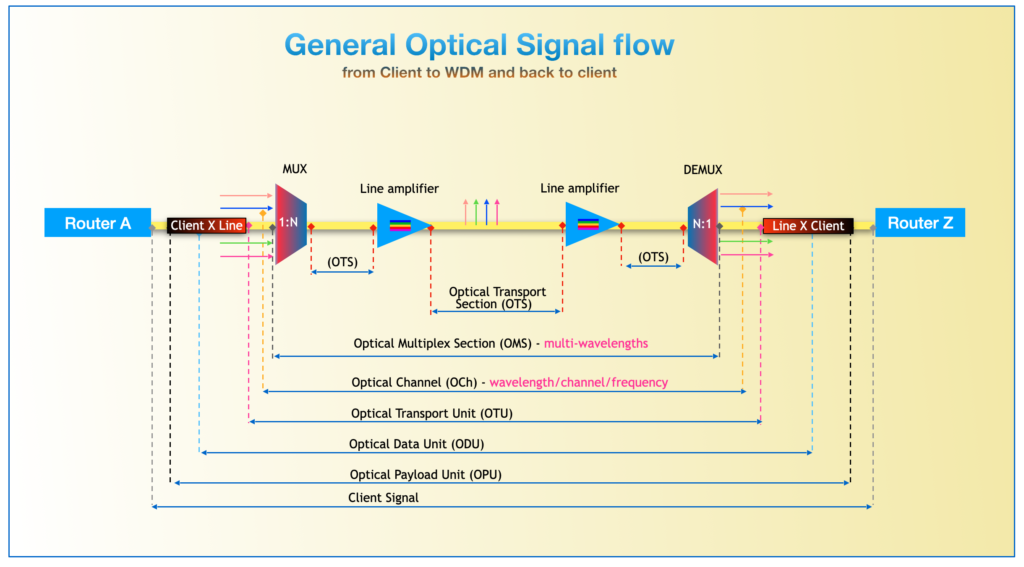

Recently, a candidate from a non-optical background asked me what OTU, OCh, ODU, etc. mean in optical networking. So I came up with this diagram, which helped him understand them in the simplest form, and I hope it will help many others too.

The above image shows how a signal received from a router is sent over the optical layer and then received at the other end. It involves the following terms:

Client Signal

The client signal is the actual payload to be carried over the optical layer. It is mostly generated by a router and fed into the client ports of an optical card called a transponder or muxponder. The client signal could be of any existing protocol, such as SONET/SDH, GFP, IP, or GbE.

Optical Channel Payload Unit (OPU)

The client signal along with added overhead (OH) makes an OPU. The OPU supports various types of clients and provides information on the type of signal being transported to the next layer. ITU-T G.709 currently supports asynchronous as well as synchronous mappings of client signals into the payload.

Optical Channel Data Unit (ODU)

The OPU along with overhead makes the ODU. The added overhead supports path monitoring (PM), tandem connection monitoring (TCM), and automatic protection switching (APS).

Optical Channel Transport Unit (OTU)

The ODU with overhead and FEC (forward error correction) makes the OTU. The OTU is used in the OTN to support transport via one or more optical channel connections. FEC enables the detection and correction of errors on an optical link.
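To make the OPU/ODU/OTU nesting a bit more concrete, here is a small sketch of the byte budget of one OTU frame. The column boundaries come from the standard G.709 frame layout (4 rows of 4080 byte-columns), which this post does not spell out; the constant and function names are my own and purely illustrative.

```python
# Byte budget of one OTUk frame, assuming the standard G.709 layout:
# 4 rows x 4080 columns, one byte per cell.
ROWS = 4
OVERHEAD_COLS = 16    # columns 1-16: framing + OTU/ODU overhead (1-14), OPU overhead (15-16)
PAYLOAD_COLS = 3808   # columns 17-3824: OPU payload area carrying the client signal
FEC_COLS = 256        # columns 3825-4080: forward error correction parity


def otu_frame_budget():
    """Return byte counts for each area of a single OTU frame."""
    total_cols = OVERHEAD_COLS + PAYLOAD_COLS + FEC_COLS
    return {
        "overhead": ROWS * OVERHEAD_COLS,
        "payload": ROWS * PAYLOAD_COLS,
        "fec": ROWS * FEC_COLS,
        "total": ROWS * total_cols,
    }


budget = otu_frame_budget()
for area, size in budget.items():
    print(f"{area:>8}: {size} bytes")
print(f"FEC share of frame: {budget['fec'] / budget['total']:.1%}")
```

The point of the sketch is only to show that overhead and FEC are small fixed areas wrapped around a much larger payload area.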

Optical Channel (OCh)

The OTU, together with further overhead, makes the OCh. An OCh is generally a single frequency/wavelength/color that is sent to a multiplexer (MUX), where different channels are multiplexed to be carried over a single strand of optical fiber.
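Since a channel can be described as a frequency or as a wavelength, a short sketch of the conversion may help. It assumes a fixed DWDM grid anchored at 193.1 THz with 50 GHz spacing, as in ITU-T G.694.1; the grid choice and all names below are assumptions for illustration, not something taken from the diagram.

```python
C_M_PER_S = 299_792_458   # speed of light in vacuum
ANCHOR_THZ = 193.1        # grid anchor frequency (assumed: G.694.1 fixed grid)
SPACING_THZ = 0.05        # 50 GHz channel spacing (assumed)


def channel_frequency_thz(n: int) -> float:
    """Centre frequency of the n-th channel relative to the anchor."""
    return ANCHOR_THZ + n * SPACING_THZ


def wavelength_nm(freq_thz: float) -> float:
    """Convert a frequency in THz to a vacuum wavelength in nm."""
    return C_M_PER_S / (freq_thz * 1e12) * 1e9


for n in (-1, 0, 1):
    f = channel_frequency_thz(n)
    print(f"n={n:+d}: {f:.2f} THz = {wavelength_nm(f):.2f} nm")
```

Each value of `n` corresponds to one channel that the MUX can combine with the others onto the same fiber.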

Optical Channel Multiplexing Section (OMS)

Once the optical channels are created, additional non-associated overhead is added to each OCh frequency, and thus the OMS is formed. It is the composite signal, the mix of channels, at the output of the multiplexer (MUX), and it spans from MUX to DEMUX.

Optical Transmission Section (OTS)

The optical transmission section (OTS) layer transports the OTS payload as well as the OTS overhead (OTS-OH). The OTS-OH, although not fully defined, is used for maintenance and operational functions. The OTS layer allows the network operator to perform monitoring and maintenance tasks between optical network elements, which include OADMs, multiplexers, demultiplexers, and optical switches.
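The whole stack described above can be sketched as a chain of wrappers. This is a deliberately simplified model: in a real network the OMS carries many OChs at once rather than wrapping a single one, and the `Layer` class and function names are hypothetical, made up only for this illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Layer:
    name: str
    adds: str                       # what this layer contributes
    inner: Optional["Layer"] = None  # the layer it wraps, if any


def wrap_client(client: str) -> Layer:
    """Wrap a client signal layer by layer, as on the transmit side."""
    steps = [
        ("OPU", "payload overhead (client type and mapping)"),
        ("ODU", "path monitoring, TCM and APS overhead"),
        ("OTU", "overhead and FEC"),
        ("OCh", "one frequency/wavelength per channel"),
        ("OMS", "composite signal between MUX and DEMUX"),
        ("OTS", "overhead between optical network elements"),
    ]
    layer = Layer("Client", client)
    for name, adds in steps:
        layer = Layer(name, adds, inner=layer)
    return layer


def unwrap(layer: Optional[Layer]) -> list:
    """Walk from the outermost layer inward, as on the receive side."""
    names = []
    while layer is not None:
        names.append(layer.name)
        layer = layer.inner
    return names


stack = wrap_client("GbE from router")
print(" -> ".join(unwrap(stack)))
```

Reading the printed chain from right to left gives the transmit order; reading it left to right gives the order in which the far end strips the layers off again.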

For more details, see https://www.itu.int/ITU-T/recommendations/rec.aspx?rec=7060&lang=en