MapYourTech | InDepth Series

MapYourTech | InDepth Series

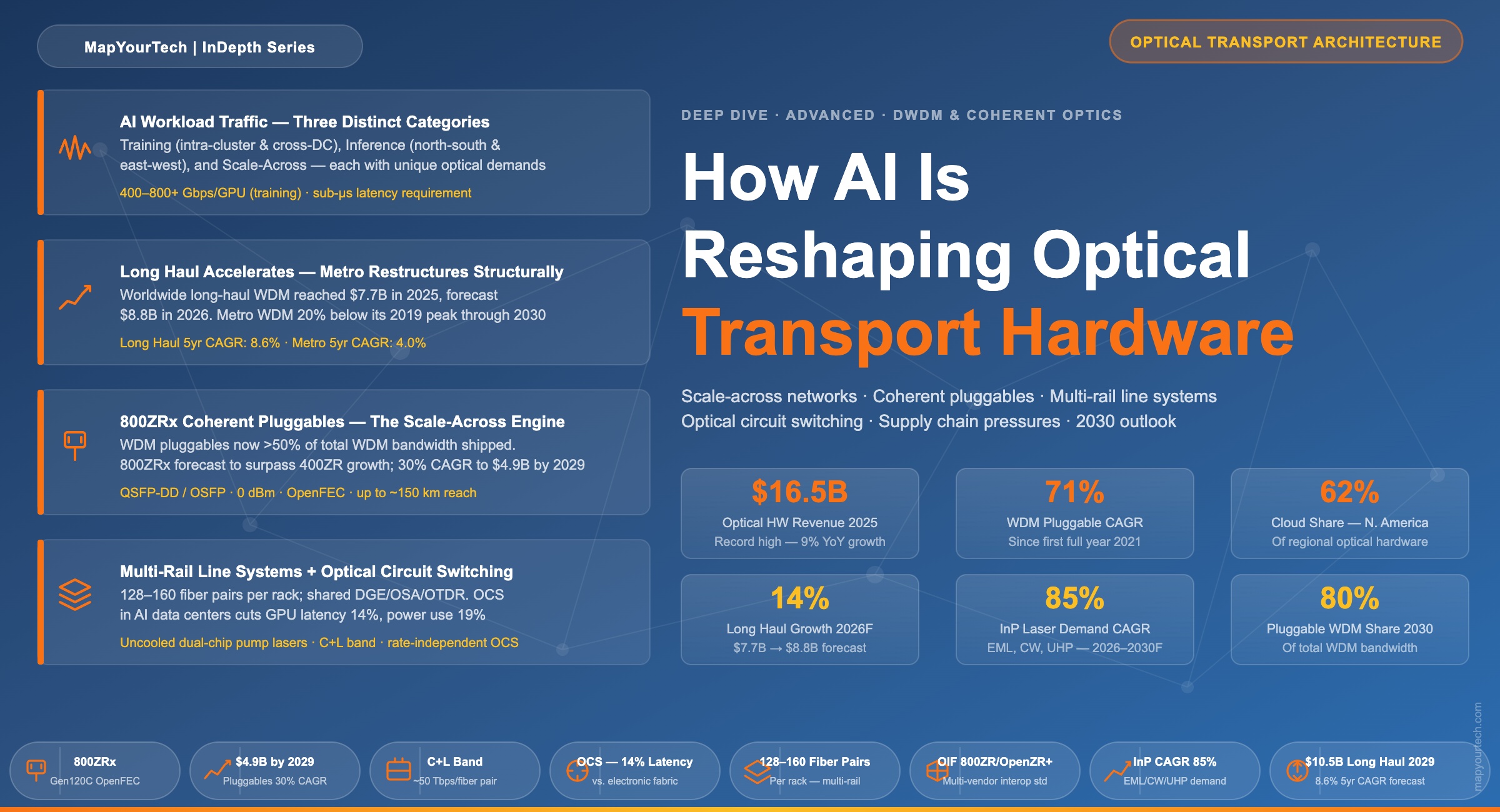

How AI Is Reshaping Optical Network Architecture and Transport Hardware

From GPU interconnect inside the data center to petabit-scale inter-region backbones — artificial intelligence workloads are rewriting the rules of optical transport. This deep dive examines the structural shifts already underway and the technology changes enabling them.

Introduction

Optical transport networks are infrastructure that most people never think about — until an AI model somewhere needs to move a trillion tokens between data centers at the speed of light. That moment of reckoning arrived decisively in 2024 and 2025, and the optical hardware industry has not been the same since.

For three decades, the primary buyers of long-haul dense wavelength division multiplexing (DWDM) equipment were telecommunications carriers connecting cities and countries. Hyperscalers — the operators of large-scale cloud computing infrastructure — participated in this market, but mainly at the metro layer. That relationship has been inverted. As of 2026, cloud operators and co-location providers account for 30% of worldwide optical hardware spending, and in North America that share reaches 62%. They are now the dominant force driving product roadmaps, procurement volumes, and the architectural evolution of the entire optical transport industry.

Artificial intelligence is the engine behind this shift. Training large language models and other foundation models requires keeping thousands — soon tens of thousands — of Graphics Processing Units (GPUs) in near-constant communication with one another. Moving the resulting volumes of data between GPU clusters, across data centers, and over long-haul backbone routes demands optical capacity at a scale and with a performance profile that the industry had not previously been asked to deliver. The hardware is responding: new form factors, new optics generations, new line system architectures, and a stressed but expanding supply chain are all part of the story.

This article examines what AI workloads actually demand from optical networks, traces the structural market shifts that have followed, and explains the technology changes — coherent pluggable optics, multi-rail line systems, optical circuit switching, and disaggregated compact modular hardware — that are enabling this new era of transport.

What AI Workloads Actually Demand from Optical Networks

Understanding the optical transport implications of AI requires separating the distinct traffic types that AI workloads generate. Each has very different latency, bandwidth, and resilience requirements, and each places a different demand on the network.

2.1 Training Traffic: Intra-Cluster and Cross-Site

Large-scale model training distributes computation across many GPUs simultaneously. During the training process, GPUs exchange gradient updates and synchronization data in all-reduce operations — a pattern of communication in which every GPU must send data to and receive data from every other GPU in the cluster. This type of traffic is extremely latency-sensitive: even microseconds of additional delay translate directly into GPU idle time, wasted compute cycles, and longer training runs.

At the intra-cluster level (within a single data center), bandwidth requirements reach 400 to 800 Gbps per GPU for very large models. Latency targets are measured in sub-microseconds. When training is distributed across multiple data centers — a practice increasingly adopted as model sizes exceed what a single facility can host — checkpoint transfers and model-state synchronization create large, periodic bursts of traffic between sites. These flows are delay-tolerant relative to intra-cluster synchronization but still require very high bandwidth because the payloads (model weights and optimizer states for frontier models) can be hundreds of gigabytes per checkpoint event.

2.2 Inference Traffic: North-South and East-West

Inference — the process of running a trained model to generate outputs in response to user requests — produces two distinct traffic patterns. North-south traffic flows between users and the data center, carrying prompts and responses. End-to-end latency targets are typically under 100 milliseconds for interactive applications; even a few hundred milliseconds of additional network delay is perceptible to users. East-west traffic within the data center coordinates multi-GPU inference, including KV-cache sharing and model sharding across accelerators.

| Traffic Scenario | Type | Latency Requirement | Bandwidth Requirement | Traffic Character |

|---|---|---|---|---|

| Intra-DC Training (GPU-to-GPU synchronization) | GPU-to-GPU all-reduce | Sub-microsecond | 400–800+ Gbps per GPU | Deterministic, iteration-driven |

| Cross-DC Training (checkpoint and state sync) | Checkpoint transfer, model-state replication | Important (RPO/RTO impact) | High — periodic large bursts (tens to hundreds of GB) | Scheduled, predictable bursts |

| Inference — North-South (user traffic) | User prompts, context retrieval, token streaming | Under 100 ms | Low to moderate per request | User-driven, QPS-shaped |

| Inference — East-West (intra-DC GPU coordination) | KV-cache sharing, model sharding | Critical (token speed) | Moderate, rising with multi-GPU inference | Stable intra-session patterns |

The Network Consequence: AI workloads require optical networks that are simultaneously deterministic (training cannot tolerate jitter), high-bandwidth (hundreds of Gbps per GPU at scale), and low-latency (inference chains depend on fast path completion). No single architecture satisfies all of these at once — which is why different optical solutions are emerging for intra-data center, data center interconnect, and long-haul transport layers.

2.3 Scale-Across: A New Traffic Category

Beyond the established training and inference patterns, a third category has emerged as hyperscalers build what the industry calls "scale-across" networks. These are dedicated optical interconnects linking GPU clusters housed in geographically separate data centers — sometimes tens of kilometers apart, sometimes hundreds — so that they function as a single logical compute fabric. Scale-across traffic combines elements of training and inference: it needs the throughput of training traffic but the operational continuity of production services. The optical capacity requirements are extraordinary: individual scale-across networks are expected to consume several hundred fiber pairs between any two data centers, totalling many thousands of fiber pairs across a continental deployment. This single application is driving a new category of line system hardware and is the primary force behind the surge in long-haul optical investment.

The Market Bifurcation: Long Haul Accelerates, Metro Restructures

The most consequential structural shift in the optical hardware market is the divergence between the long-haul and metro WDM segments. This divergence is not cyclical; it reflects a durable change in where and how optical capacity is being consumed.

3.1 Long Haul: A Market Rewritten

The worldwide long-haul WDM market grew 11% in 2025 to reach $7.7 billion. That growth rate accelerates to a forecast 14% in 2026, taking the segment to approximately $8.8 billion. By 2029, long-haul revenue is projected to reach $10.5 billion — $1.5 billion higher than analysts had forecast just one year earlier, with the 5-year compound annual growth rate revised upward from 5.4% to 8.6%.

The primary driver is hyperscaler data center interconnection for AI training and inference. North America is the epicentre of this expansion. Long-haul spending in the region surged 56% in 2025 as hyperscalers accelerated inter-city data center interconnection. Excluding China from the analysis, the global long-haul market grew 27% in 2025 and is forecast to grow a further 19% in 2026. Long-haul now benefits from a structural advantage over the metro segment: it is largely insulated from the cannibalization effect of IP-over-DWDM (router-hosted pluggable optics that bypass traditional metro optical systems). Ultra-long-haul and high-performance routes still require embedded, purpose-built coherent interfaces — exactly what major optical vendors supply.

WDM segment revenue comparison

Bar chart comparing Long Haul, Metro WDM, SLTE, and WDM Pluggables revenue from 2022 through 2026 forecast

3.2 Metro WDM: Structural Headwinds

The metro WDM segment tells a different story. At $6.4 billion in full-year 2025, metro WDM revenue remains nearly 20% below its 2019 peak of $7.9 billion — a level it is not forecast to recover through 2030. Growth in the metro segment is expected at just 3 to 5% annually, with a 5-year compound annual growth rate of 4%. Two structural forces are at work.

The first is IP-over-DWDM: cloud operators have made router-hosted coherent pluggable optics their default architecture for data center interconnect, bypassing traditional metro optical transport systems entirely. This represents a spending shift of $2.5 billion out of traditional optical equipment in 2024 alone, captured instead in router hardware budgets and the new standalone pluggable segment.

The second force is the evolution of the pluggable interface itself. As 800ZRx modules become available in volume in 2026, their use in pluggable transponders will become the most cost-effective approach for aggregating and transporting 100G and 400G client traffic. The economic logic of the dedicated metro optical system faces sustained pressure as the pluggable becomes a credible alternative across an increasing range of reach and capacity requirements.

The defining divergence: By 2029, long-haul revenue is forecast to exceed metro WDM revenue by $2.9 billion — a 38% gap. In the prior forecast (just one year earlier), that gap stood at $2.0 billion (29%). The divergence is widening, not narrowing, and it is driven entirely by the concentration of hyperscaler AI investment in long-distance optical infrastructure.

3.3 Submarine and WDM Pluggables: Growth at the Edges

The submarine line terminal equipment (SLTE) segment is also benefiting from AI. After six consecutive years in a narrow $490–550 million annual revenue band, SLTE is forecast to break higher — growing at a 7% compound annual growth rate from $540 million in 2025 to $740 million by 2030. Hyperscalers now represent the vast majority of new submarine cable capacity, connecting global data centers across ocean routes. Geopolitical pressures — including cable sabotage incidents and route concentration risk in the Red Sea — are also spurring new cable deployments across diverse geographic paths.

Standalone WDM pluggables, newly tracked as an independent market segment as of early 2026, add $1.8 billion to full-year 2025 optical hardware revenue and are forecast to reach $4.9 billion by 2029 at a 30% compound annual growth rate. This single segment lifts the total optical hardware market 5-year growth rate from 6% to 10%. It is now the single largest driver of incremental revenue growth in the optical hardware market.

The Pluggable Revolution and Its Structural Impact

The coherent pluggable transition is arguably the most disruptive architectural development in optical transport since the introduction of DWDM itself. Understanding it requires tracing how hyperscalers engineered it into existence and what it means for the rest of the market.

4.1 How 400ZR Was Born

Around 2015, hyperscalers identified that the optical transport industry's model — proprietary, vertically integrated systems built for telecommunications carriers — was incompatible with how they wanted to operate. They wanted to procure optical interfaces as interchangeable commodity components, disaggregated from host platforms, interoperable across vendors, and upgradeable independently of the rest of the system.

They applied this influence to drive the development and standardization of 400ZR through the Optical Internetworking Forum (OIF). Volume shipments began in 2021. Since then, more than 1.5 million 400ZRx optics have been sold — the fastest ramp of any coherent optics generation in the history of the industry. As of 2025, excluding the Chinese market (which uses different form factors), pluggable DWDM optics out-shipped embedded optics by more than two-to-one on a unit basis.

4.2 The 800G Generation and AI Scale-Across

800ZRx — the 800G equivalent in the pluggable coherent family — represents the current centre of gravity for hyperscaler deployment. Initial analysis suggested 800G might be a relatively short-lived stepping stone between 400G and a future 1600G generation. That view has been revised substantially. The scale-across opportunity has caused 800ZRx demand forecasts to be revised upward multiple times. 800ZRx is now expected to surpass 400ZR's growth trajectory, limited primarily by the industry's capacity to supply rather than by demand.

The OIF standard 800ZR offers base performance. Hyperscalers predominantly prefer 800ZR+ (also called Gen120C), which uses higher-performing Open Forward Error Correction (OpenFEC) and vendor-specific proprietary modes. 800ZR+ extends inter-data center connectivity to approximately 150 km — covering the geographic range of most scale-across deployments — while a 400G ultra-long-haul mode enables use of the same module on more challenging routes, allowing network operators to standardize on a single interface type across a wider range of applications.

IP-over-DWDM pluggable coherent modules in router-hosted applications represented $1.5 billion in 2024 revenue, of which $1.25 billion came from cloud operators. This category is forecast to grow at a 29% compound annual growth rate to exceed $5 billion by 2029.

4.3 Looking Ahead: 1600ZRx and 100ZR

The next generation, 1600ZRx (Gen240C), may represent a structural turning point. It will likely be the first coherent generation in which the pluggable form factor (Gen240C) reaches the market before the embedded embedded-optic equivalent (Gen240P) at a comparable baud rate. As the addressable market for embedded optics narrows — squeezed by improving pluggable performance on one side and the economics of hyperscaler procurement on the other — 1600ZRx could become the generation at which a universal, standardized pluggable module serves the full range of metro, regional, and potentially long-haul terrestrial routes.

At the other end of the spectrum, 100ZR (a 100G coherent pluggable in QSFP28 form factor) has also gained attention. At 0 dBm transmit power, it supports links from 160 km point-to-point to 500 km with in-line amplification. This enables 100G coherent connectivity using the same small, low-power form factor used by standard client optics — a useful option for service providers and enterprises that need coherent performance at lower capacity points without the cost of a traditional transponder.

IP-over-DWDM pluggable revenue forecast

Stacked bar chart showing growth in IP-over-DWDM pluggable revenue from 2024 through 2029, broken down by 400ZR, 800ZR, and 1600ZR generations

Scale-Across: The New Architectural Imperative

Scale-across networking is the concept that has captured the attention of every optical transport equipment supplier in 2026. It refers to the construction of dedicated, ultra-high-capacity optical interconnects that allow GPU clusters in separate data centers to operate as a unified compute fabric — as if they were in the same building.

5.1 Why Scale-Across Is Different

Traditional data center interconnection is designed around traffic that flows into and out of a data center — user requests, cloud storage access, replication traffic. Scale-across networks carry a completely different category of traffic: all-reduce gradient synchronization between GPU accelerators that happen to be in different buildings. The volume, the pattern, and the sensitivity of this traffic are all different from anything the optical transport industry had previously been asked to handle.

The per-fiber capacity of a modern long-haul DWDM system operating in the C and L bands using 16QAM modulation approaches approximately 50 terabits per second. Scale-across networks are anticipated to consume several hundred fiber pairs between any two data centers — meaning a single scale-across deployment between two sites could require more fiber capacity than some national backbone networks. Across a continental hyperscaler deployment, fiber pair counts in the thousands are realistic.

5.2 Network Architecture for Scale-Across

Early scale-across deployments are being built between data centers within approximately 150 km of one another — within a metropolitan region or a corridor between adjacent metro areas. At these distances, in-line amplification is generally not required, which simplifies the line system significantly. The first such deployments use existing line system platforms without multi-rail integration.

As scale-across expands to cover longer distances and more geographically diverse data center locations — crossing state boundaries, connecting different climate zones, eventually spanning continents — in-line amplification becomes unavoidable. This introduces the amplifier hut problem: the legacy infrastructure of roadside amplifier sites built for traditional carrier networks, typically with limited rack space and power budgets of a few hundred amperes at 48V DC, cannot accommodate the hundreds of parallel fibers that scale-across demands. A purpose-built approach to in-line amplification is required.

The Amplifier Hut Constraint: Built along roadsides and railroad tracks decades ago, many in-line amplifier sites offer only a handful of equipment racks and limited available power. Amplifying the hundreds of parallel fibers anticipated by large-scale AI deployments demands a new approach to amplifier integration — one that dramatically increases fiber density per rack and per watt of power consumed.

5.3 Full-Band Transponders

A further architectural evolution closely associated with scale-across is the full-band transponder — a device that integrates client optics, line optics, multiplexing, and amplification within a single platform, designed to light up the complete spectral capacity of a fiber upon initial deployment. Eliminating per-wavelength provisioning reduces cabling complexity and operational overhead — both highly valued by hyperscaler operators who run networks with software-driven automation rather than manual configuration. Full-band transponders sit at the intersection of several technology trends: high-capacity coherent interfaces, integrated C+L band line systems, and the hyperscaler preference for operationally simple platforms that can be managed at scale.

Multi-Rail Line Systems: Engineering for Hundreds of Fibers

The scale-across opportunity has created a new product category: multi-rail line systems. These platforms consolidate the amplification of many parallel fiber pairs — "rails" in hyperscaler terminology — within a single line card or chassis, rather than treating each fiber pair as a separate line system requiring independent amplifier hardware.

6.1 The Problem Being Solved

A conventional optical line system amplifies a single fiber pair per chassis. For a scale-across network requiring 200 fiber pairs between two data centers, that means 200 separate conventional line systems — 200 racks of amplifier hardware — at each intermediate amplifier site. Given the space and power constraints of existing amplifier infrastructure, this is physically impossible. Multi-rail line systems address this by integrating the amplification of dozens to over a hundred fiber pairs within a single rack or chassis, using shared common equipment (control systems, optical spectrum analyzers, optical time-domain reflectometers) across all rails rather than replicating it per fiber pair.

The component ecosystem enabling this is centred on uncooled dual-chip pump lasers, which allow the high levels of amplifier integration needed to achieve multi-rail densities. These lasers are a key enabling component, and their supply is currently concentrated among a very small number of manufacturers — a fact with significant implications for the pace of scale-across deployment.

6.2 Platform Designs and Vendor Approaches

Multiple established optical transport vendors introduced multi-rail line system platforms at OFC 2026 in March, confirming that this product category has reached commercial reality. The competitive approaches illustrate different trade-offs between density, modularity, and form factor.

| Approach | Form Factor | Claimed Fiber Pair Density | Notable Features | Expected Availability |

|---|---|---|---|---|

| Disaggregated 1RU approach | 1RU pizza-box, standalone per amplifier site | Up to 160 fiber pairs per rack | Maximizes density; designed for data center integration | 2H 2026 indicated by one vendor |

| Chassis-based modular approach | Larger chassis with common equipment | 128 fiber pairs per chassis | Integrated terminal equipment option; supports both 600mm and 300mm depths; C+L-band capable | 2027 for most vendors |

| All-in-one small chassis | 1RU, four-rail integration | 4 fiber pairs per 1RU | Component supplier demonstrating platform approach; shared DGE, OSA, OTDR across four rails | Available as reference design |

The differences between platforms are likely to matter more in specifications documents than in actual deployment experience. Hyperscalers sourcing these systems are primarily concerned with supply diversity and delivery capacity rather than performance differentiation between competing products. They will apply multi-source procurement — qualifying multiple vendors to supply equivalent systems — as they do in other hardware categories. This places pressure on vendors to deliver functionally interchangeable products while competing on the ability to manufacture and deliver at hyperscaler volumes.

6.3 C+L Band and the Spectral Efficiency Dimension

Integrated C+L band line systems — platforms that simultaneously support the conventional C band (approximately 4.8 THz) and the L band (an additional 4.8 THz of spectrum at longer wavelengths) — are an important complementary development. Many hyperscalers deploying scale-across infrastructure light up both bands to their full capacity upon initial system deployment, eliminating the need for a future capacity expansion upgrade. Integrated C+L systems have no incremental cost for L-band expansion once the hardware is installed, making this the economically efficient approach for operators who anticipate sustained traffic growth. The accelerating deployment of these systems is expected to contribute materially to long-haul optical hardware revenue growth through 2027 and beyond.

Optical Circuit Switching and the Intra-Data Center Frontier

While much of the optical transport market's attention is focused on the inter-data center scale-across opportunity, a separate but equally significant architectural evolution is occurring inside the data center: the adoption of optical circuit switching (OCS) for GPU fabric interconnection.

7.1 Why OCS Inside the Data Center

Conventional data center networks use electronic packet switching throughout — at the Top of Rack (ToR) layer, in the spine-leaf fabric, and at every aggregation level. As GPU clusters grow to tens of thousands of accelerators, the power, cost, and latency of electronic switching fabrics at AI scale become increasingly problematic. An OCS-based data center network replaces some or all of the electronic switching with all-optical connectivity — a reconfigurable optical fabric that can establish and release fiber connections between rack switches on a time scale of milliseconds.

The advantages demonstrated in early deployments are material. OCS-based networks can reduce GPU-to-GPU communication latency by approximately 14%, achieve roughly 19% lower network power consumption compared to a traditional three-layer electronic fat-tree topology, and reduce the number of optical modules in the network (improving reliability — one analysis indicated a 17% reduction in module-related failure rate). OCS is also rate-independent: a switch fabric that works with 400G interfaces also works with 800G and future 1.6T interfaces without hardware replacement, because the switching is performed in the optical domain on the physical fiber rather than on the electrical signals.

7.2 Commercial Deployment Status

As of early 2026, the primary production user of OCS technology at scale is one of the largest hyperscaler AI compute operators, where it is used in conjunction with custom AI accelerator chip architectures. A number of other hyperscalers and cloud operators are running trials and limited-scale deployments. OCS matrix sizes are reaching up to 1,000 ports on vendor roadmaps, enabling a single OCS unit to switch very large numbers of optical connections. The growing number of OCS suppliers — including established photonics component companies and newer entrants — reflects the market signal that this technology is approaching mainstream adoption within AI data centers.

OCS and the intra-DC optical opportunity: Optical networks are moving beyond backbone and metro into the data center itself. The combination of OCS for fabric interconnection and high-density pluggable optics at the rack level creates a fully optical data center network architecture — something that was conceptually appealing but technically and economically premature even five years ago. AI compute density is what finally makes the economics work.

Compact Modular Hardware and the Disaggregation Trajectory

The disaggregation that hyperscalers began applying to metro optical transport in the mid-2010s — replacing integrated vendor-specific platforms with modular, open, data-center-form-factor hardware — has now permeated every layer of the optical transport stack.

8.1 The Compact Modular Market

The worldwide share of optical transport hardware sales in the Compact Modular form factor reached 24% on a rolling 4-quarter basis as of late 2025, up from 19% a year earlier. Compact Modular platforms differ from conventional telecom hardware in several key respects: they use front-to-back airflow (matching data center cooling), operate at relaxed thermal ratings (40°C versus the 55°C of traditional telecom equipment), support AC power (in addition to or instead of DC), and are physically shallower to fit in data center racks. They separate functions that were historically integrated — switching, coherent transponders, line systems — into discrete, independently upgradeable hardware elements.

Line systems now represent a material and growing fraction of Compact Modular revenue. Disaggregated, modular line system platforms from established optical transport vendors illustrate the adoption of data center physical characteristics by what was once purely a carrier-focused product category.

8.2 Pluggable Transponders: The Next Disaggregation Step

Pluggable transponders — transport chassis that accept coherent pluggable optics as the line-facing interface rather than embedded proprietary optics — represent the next step in disaggregation. Service providers who cannot yet adopt IP-over-DWDM (because their router infrastructure does not yet support 800G coherent interfaces, or because they need OTN overhead functionality) can use pluggable transponders to gain access to 800ZR+ economics and performance within an optical transport platform.

The 400G generation of pluggable transponders saw limited uptake outside China, where they are widely used as uplinks for large OTN switching systems. The 800G generation is expected to change this picture. Carriers can deploy 800ZR+ optics within pluggable transponders now, accessing 800G regional distances and 400G ultra-long-haul distances on the same module — without waiting for the multi-year rollout of 800G-capable routers across their networks. This economic logic is expected to drive material growth in the pluggable transponder market from 2026 onward.

8.3 Co-Packaged Optics: The Frontier of Integration

At the far end of the integration spectrum lies co-packaged optics (CPO) — the physical integration of optical transceivers with switching silicon within the same package or at the package boundary, eliminating the electrical interface between the switch ASIC and the optical module. CPO dramatically reduces power consumption per bit at very high port densities and is expected to be important for future generations of AI switch fabrics.

CPO requires an external laser source, typically in the form of pluggable laser modules. The power output requirements of these laser sources are rising rapidly as CPO system designs scale: from initial 200–250 mW levels to current 400 mW units, with 800 mW "super-high-power" lasers in development that could enable much higher split ratios and reduce the number of laser modules required per system. The laser supply chain for CPO is distinct from the laser supply chain for coherent transport but shares some underlying technology — and is subject to its own capacity constraints as AI data center deployments accelerate.

Supply Chain Under Stress

The rapid acceleration of AI-driven optical hardware demand has caught the supply chain in a state that is uncomfortably familiar to anyone who experienced the post-pandemic semiconductor and optical component shortage of 2021–2023. Just three years after that crisis, supply pressures are re-emerging — this time driven by structural demand growth rather than pandemic-related disruptions.

9.1 Indium Phosphide Lasers

The most acute near-term constraint is indium phosphide (InP). InP is the material platform on which the key active components of coherent transceivers are built: electro-absorption modulated lasers (EMLs), continuous-wave (CW) laser sources, and ultra-high-power pump lasers. At least one major optical component supplier reported a severe supply imbalance in InP lasers in early 2026 as AI-driven demand surged. The InP optical lane volume demand for EML, CW, and ultra-high-power lasers is forecast to grow at approximately 85% CAGR from 2026 through 2030 — an extraordinary rate that demands aggressive capacity expansion from InP wafer manufacturers.

Expanding InP manufacturing capacity is a multi-year process. InP wafer fabs require specialized equipment and long process qualification cycles. The supply concentration is notable: InP wafer capacity is currently concentrated among a handful of manufacturers, meaning that any disruption to a single facility has disproportionate market impact. Several major photonics companies are investing heavily in InP capacity expansion — one is more than doubling its EML unit capacity by end of calendar year 2026 relative to the prior year baseline — but even with these investments, demand growth is likely to outpace supply additions in the near term.

9.2 Single-Mode Fiber

Single-mode fiber — the physical medium carrying all of this traffic — saw its price rise approximately 75% in January 2026, the highest single increase in seven years. This reflects both the volume of fiber being consumed in hyperscaler data center interconnection deployments and the supply constraints on fiber manufacturing. Corning signed a $6 billion multi-year fiber supply agreement with one hyperscaler, illustrating the scale at which these infrastructure commitments are being made. Fiber cable laying capacity — specifically the limited number of specialised cable laying ships for submarine deployments — is an additional constraint on the pace at which subsea capacity can be added globally.

9.3 WDM Pluggable Lead Times

Lead times for certain WDM pluggable modules had reached the 3-to-6-month range as of early 2026. Module vendors are finding it difficult to satisfy smaller customers — service providers, enterprises, and regional carriers — in the face of massive hyperscaler purchase orders that consume the majority of production. At least one major optical system vendor is essentially sold out for its 2026 allocation and has increased supply chain investment by 50% to address the constraint.

Supply chain lesson: The concentration of demand in a small number of very large customers creates a tiered supply environment in which smaller buyers are effectively locked out of near-term allocation. Network operators planning optical capacity additions in 2026 and 2027 should engage their suppliers early and be prepared for extended lead times on high-demand components including 800ZRx modules, pump lasers, and amplifier hardware for multi-rail line systems.

Regional Dynamics: North America Leads, China Contracts

The geographic distribution of optical hardware spending is shifting in ways that reflect the geography of AI infrastructure investment — concentrated in North America, growing in Europe, and declining in China.

10.1 North America

North America grew 30% in the fourth quarter of 2025 and 32% for the full year, reaching a record $6.4 billion. Long-haul surged 56% as hyperscalers built out inter-city data center interconnection for AI workloads. Cloud and co-location spending now accounts for 62% of regional optical hardware — a figure that would have been implausible five years ago when service providers dominated procurement. North America also accounts for the overwhelming majority of standalone WDM pluggable revenue. Supply chain constraints, not demand, are the limiting factor on further growth in the region.

The North America 5-year forecast was revised substantially upward in early 2026: 2026 growth is now projected at 21% (previously 14%), with a 5-year compound annual growth rate of 14.9% (previously 8.6%). The revision reflects the scale of hyperscaler AI infrastructure commitments, with aggregate 2026 capital expenditure from the major hyperscalers exceeding $600 billion — driven primarily by AI training and inference build-out.

10.2 EMEA

EMEA returned to growth in 2025 after three consecutive years of decline, expanding 14% in the fourth quarter and 12% for the full year to $3.4 billion. Long-haul increased 20%, driven by carrier network modernization and emerging hyperscaler data center interconnect demand in the region. The European market faces an additional tailwind from regulatory pressure: the EU Digital Networks Act, proposed in January 2026, would mandate the removal of high-risk vendors from European telecommunications networks, representing a multi-billion-euro optical hardware replacement opportunity for vendors qualified to serve the European market. EMEA's 5-year forecast compound annual growth rate was revised from 3.0% to 7.5%.

10.3 China

China presents a sharply contrasting picture. Optical hardware revenue fell 10% for full-year 2025 to $4.1 billion — the seventh consecutive quarter of year-over-year decline and the lowest annual total since 2018. The 5G infrastructure investment cycle that drove Chinese optical spending through the early 2020s has subsided. Carrier capital expenditure remains constrained. The government-driven East Data West Compute initiative that temporarily boosted long-haul investment in 2022 and 2023 has wound down. The China 5-year forecast is now negative, with a compound annual growth rate of -1.6%.

10.4 RoAPAC and India

The rest of Asia-Pacific declined modestly in 2025, but India represents a notable exception. Several major Indian industrial conglomerates have announced multi-gigawatt AI data center projects, positioning India for material optical infrastructure investment over the following several years as these facilities are built and interconnected. India's trajectory is one of the most interesting regional stories to watch through the balance of the decade.

Looking Forward to 2030

The optical transport industry in 2030 will look materially different from 2026. The trends in motion are directionally clear, even if specific timelines carry uncertainty.

11.1 Pluggables Will Dominate Bandwidth

Coherent pluggable optics already account for more than 50% of total WDM bandwidth shipped in 2025. By 2030, this is forecast to reach 80%, as improving performance at 800G and the introduction of 1600ZRx steadily narrow the addressable market for embedded optics to subsea and ultra-long-haul routes. The transition is not merely a form factor change — it represents a fundamental shift in who captures the economic value of coherent optics. Vertically integrated optical vendors are adapting by competing directly in the pluggable market with their own DSP designs and modules, rather than leaving that space to component specialists.

11.2 The Long Haul / Metro Divergence Widens

Long haul will exceed metro WDM revenue by $2.9 billion in 2029 — a gap that will likely continue to widen into 2030. The metro market will grow slowly, supported by pluggable transponders, terminal multiplexing equipment for scale-across termination, and public broadband funding for rural middle-mile networks. But the era of large-scale metro optical platform investment driven by service provider capex cycles is structurally constrained by IP-over-DWDM cannibalization.

11.3 Submarine Grows, Slowly

SLTE revenue will grow at a 7% compound annual growth rate through 2030, supported by hyperscaler submarine cable ownership and geopolitically driven route diversification. The fundamental constraint on faster growth is cable ship availability — there are a limited number of purpose-built cable laying vessels globally, and their schedule constrains the pace of new cable deployments regardless of demand or investment.

11.4 New Architectures at Every Layer

By 2030, the architectural pattern of optical transport will have completed a transformation. Inside data centers, optical circuit switching will handle GPU fabric interconnection in the largest AI facilities. Between data centers, scale-across optical networks will operate as purpose-built GPU communication infrastructure. Across regions, multi-rail line systems will carry hundreds of fiber pairs per amplifier site. The Shannon Limit will be approached on individual fiber pairs, making spatial diversity — more fibers, more cables — the primary mechanism for capacity growth. The industry has spent three decades squeezing more bits into each fiber. The AI era is also about building infrastructure for vastly more fibers.

Main Points — Section 11

- Coherent pluggables will carry approximately 80% of total WDM bandwidth by 2030, with embedded optics retreating to subsea and ultra-long-haul niches.

- The long-haul market will sustain growth through the period while metro WDM grows slowly, with the gap between the two segments widening each year.

- Multi-rail line systems, optical circuit switching, and full-band transponders represent new product categories that barely existed in 2024 and will be mainstream by 2030.

- Spatial diversity — more fibers, not more bits per fiber — becomes the dominant capacity scaling mechanism as the Shannon Limit is approached on individual fiber pairs.

- Supply chain expansion in InP, single-mode fiber, and laser manufacturing will determine whether demand is met at the pace AI infrastructure buildouts require.

Conclusion

Artificial intelligence has done something that two decades of incremental bandwidth growth and 5G build cycles never quite managed: it has made optical transport a strategic infrastructure question at the highest levels of corporate decision-making. Hyperscalers are not merely buying more of the same optical hardware — they are redefining what optical hardware is, what it does, and how it is built.

The evidence is visible in every dimension of the market. Long-haul DWDM, once the province of intercontinental carrier networks, is now the fastest-growing segment of optical hardware because of AI training workloads spanning geographically distributed data centers. Coherent pluggable optics, which barely existed at commercial volumes five years ago, already account for the majority of WDM bandwidth and are projected to reach 80% of the market by 2030. Multi-rail line systems — a product category that did not exist in any commercial form in 2024 — were demonstrated by multiple vendors at OFC 2026 and will be deployed by 2027. Optical circuit switching, once an academic curiosity, is running in production AI data centers.

For optical networking professionals, this transition demands fluency across multiple technology domains simultaneously: coherent DSP performance, pluggable module economics, line system amplifier design, and data center interconnect architecture. The boundaries that once separated transport networking from compute networking, and carrier networking from hyperscaler networking, are dissolving.

The supply chain is the near-term limiting factor, not the technology or the demand. Indium phosphide laser capacity, single-mode fiber production, and the manufacturing scale of 800ZRx modules are all being stretched by hyperscaler procurement at unprecedented volumes. The optical hardware industry will spend the next several years simultaneously growing into the demand that AI has created and building the supply infrastructure to sustain it.

What is clear is that the optical transport industry has entered a phase of change more profound than anything since the introduction of DWDM itself in the late 1990s. The physics of light in fiber remains the same. Everything else is being rebuilt.

Glossary

- 800ZRx

- Collective term for the family of 800G coherent pluggable modules including OIF-standard 800ZR and the higher-performing OpenZR+ variant (800ZR+, also called Gen120C), designed for data center interconnect and scale-across applications at distances up to approximately 150 km.

- All-Reduce

- A collective communication operation in distributed AI training in which every participating GPU sends data to all others and receives aggregated data in return. The most bandwidth- and latency-intensive operation in large-scale model training.

- C+L Band

- The combination of the conventional C band (approximately 1530–1565 nm, ~4.8 THz usable spectrum) and the L band (approximately 1565–1625 nm, ~4.8 THz additional spectrum) in a single optical line system, roughly doubling the available spectral capacity of a fiber pair.

- Compact Modular Hardware

- Optical transport platforms designed with data center physical characteristics — front-to-back airflow, AC power, 40°C operating temperature, greater chassis depth — rather than traditional telco central office specifications. Includes fixed-configuration 1RU devices and small modular chassis.

- Coherent Pluggable

- A coherent optical transceiver module in a standardized pluggable form factor (QSFP-DD, OSFP) that implements DWDM transmission and is interchangeable between host platforms from different vendors, enabling IP-over-DWDM and pluggable transponder architectures.

- IP-over-DWDM

- An architecture in which coherent DWDM optical interfaces are hosted directly within IP routers or white-box switches rather than in dedicated optical transport systems. The IP router handles both packet forwarding and wavelength transmission.

- Multi-Rail Line System

- An optical line system platform designed to amplify many parallel fiber pairs (rails) within a single line card or chassis, sharing common components such as optical spectrum analyzers and optical time-domain reflectometers across all rails to achieve high integration density at amplifier sites.

- Optical Circuit Switching (OCS)

- An all-optical switching technology that establishes and releases fiber-layer connections between network elements in the optical domain rather than the electronic domain, eliminating the need for optical-electrical-optical conversion at the switch fabric. Used in AI data centers for GPU cluster interconnection.

- Scale-Across

- A hyperscaler networking architecture in which GPU clusters located in geographically separate data centers are interconnected with dedicated, ultra-high-capacity optical links to function as a single unified compute fabric for distributed AI training and inference.

- Shannon Limit

- The theoretical maximum information capacity of a communication channel, set by the channel's bandwidth and signal-to-noise ratio (Claude Shannon, 1948). In optical fiber, the nonlinear Shannon Limit constrains per-fiber capacity to approximately 50 Tbps using C+L band at 16QAM modulation, making additional fibers (spatial diversity) the primary capacity scaling path beyond this point.

References

- OIF, "Optical Internetworking Forum — 400ZR and OpenZR+ Implementation Agreements," Optical Internetworking Forum.

- ITU-T GSTR.ION-2030 — International Optical Networks towards 2030 and Beyond (ION-2030), ITU-T Study Group 15.

- IEEE, "Optical interconnection networks for high-performance computing and data center systems," IEEE Journal of Selected Topics in Quantum Electronics.

- MEF, "Lifecycle Service Orchestration and Carrier Ethernet Standards," MEF Forum.

- Lumentum Operations LLC, "InP Capacity Leadership and EML Laser Shipments," OFC Investor Presentation.

- Ciena Corporation, "OFC 2026 Investor Tabletop — WaveLogic 6e and Scale-Across Optical Infrastructure," OFC.

- ITU-T G.694.1, "Spectral grids for WDM applications: DWDM frequency grid," ITU-T Study Group 15.

Sanjay Yadav, "Optical Network Communications: An Engineer's Perspective" – Bridge the Gap Between Theory and Practice in Optical Networking.

Developed by MapYourTech Team

For educational purposes in Optical Networking Communications Technologies

Note: This guide is based on industry standards, best practices, and real-world implementation experiences. Specific implementations may vary based on equipment vendors, network topology, and regulatory requirements. Always consult with qualified network engineers and follow vendor documentation for actual deployments.

Feedback Welcome: If you have any suggestions, corrections, or improvements to propose, please feel free to write to us at [email protected]

Optical Communications & Network Automation Expert | Author of 3 Books for Optical Engineers | Founder, MapYourTech

Optical networking engineer with nearly two decades of experience across DWDM, OTN, coherent optics, submarine systems, and cloud infrastructure. Founder of MapYourTech. Read full bio →

Follow on LinkedIn