optical networking

Showing 1 - 10 of 12 results

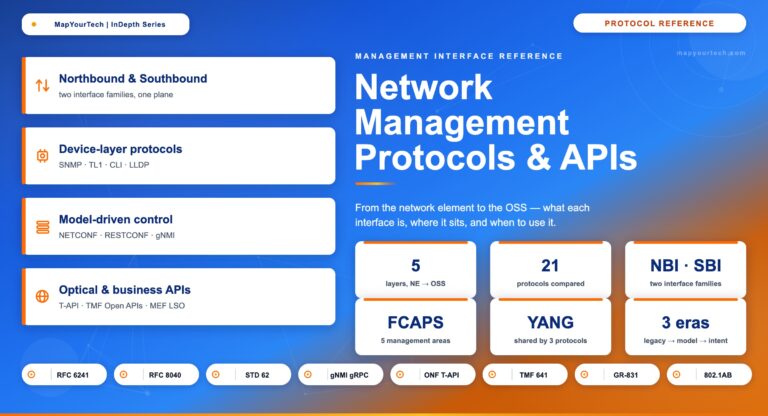

Network Management Protocols and APIs: An Introduction Protocols & APIs · NBI and SBI Network Management Protocols and APIs: An...

Free · May 29, 2026

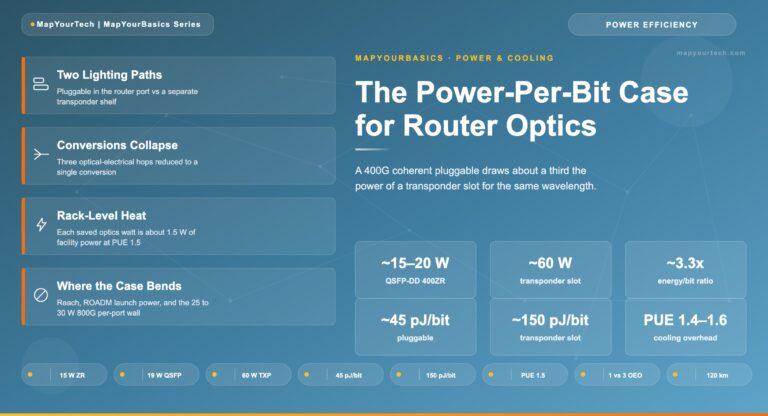

MapYourTech | MapYourBasics Series The Power-Per-Bit Case for Router Optics A 400G coherent pluggable in a router port draws roughly...

Free · May 21, 2026

Fibonacci and Golden Ratio in Optical Networking MapYourBasics Series The Fibonacci Sequence and the Golden Ratio in Optical Fiber Networking...

Free · May 2, 2026

ITU-T G.694.1 DWDM Channel Grid: Fixed Grid, Flexible Grid, and Frequency Calculation DWDM Standards · Comprehensive Guide ITU-T G.694.1 DWDM...

Free · March 9, 2026

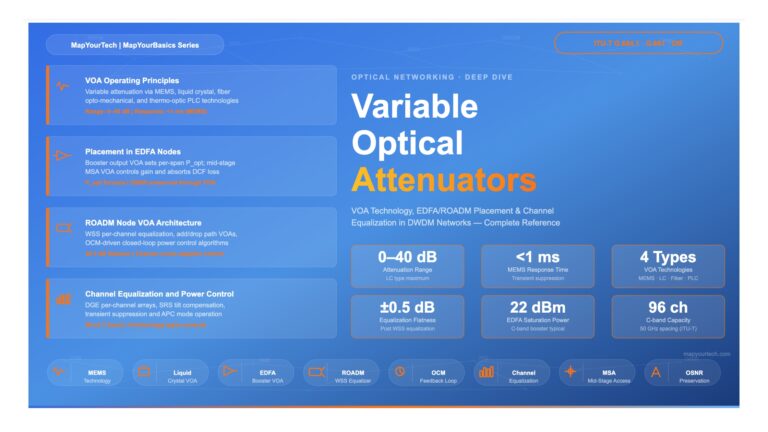

Variable Optical Attenuators (VOAs) in DWDM Systems | MapYourTech Optical Networking Variable Optical Attenuators (VOAs) in DWDM Systems A comprehensive...

Free · March 4, 2026

Optical Power Budgeting: Step-by-Step Guide | MapYourTech Optical Power Budgeting: Step-by-Step Guide Standards: ITU-T G.959.1 · G.698.2 · G.691 Scope:...

Free · March 1, 2026

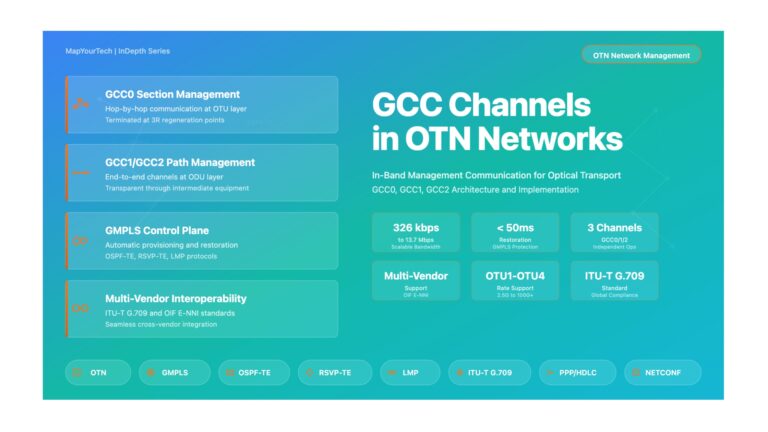

GCC Channels (GCC0/GCC1/GCC2) in OTN – Comprehensive Guide | MapYourTech GCC Channels in OTN Networks A Comprehensive Guide to General...

Free · December 30, 2025

Pluggable vs Embedded Optics: Which is Right for Your Network? | MapYourTech Pluggable vs Embedded Optics: Which is Right for...

Free · November 20, 2025

The integration of artificial intelligence (AI) into optical networking is set to transform the field dramatically, offering numerous benefits for engineers at...

Free · March 26, 2025

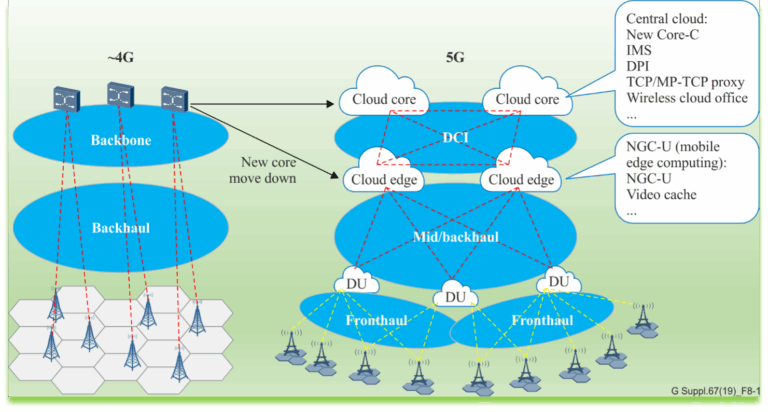

The advent of 5G technology is set to revolutionise the way we connect, and at its core lies a sophisticated...

Free · March 26, 2025
