Latency in Coherent Optical Systems

A comprehensive technical analysis of propagation delay, component contributions, application-specific budgets, and practical strategies for latency reduction in modern optical networks.

1. Introduction

2. Understanding Latency in Optical Networks

2.1 Definition and Units

Latency is the time elapsed for a data signal to travel from a transmitter to a receiver, or equivalently the end-to-end delay experienced by any given bit as it passes through the system. In optical networks it is most commonly expressed in microseconds (µs) or milliseconds (ms), though ultra-low-latency applications such as high-frequency trading require resolution in nanoseconds (ns).

Two related quantities are commonly specified together:

- One-way latency: The delay from transmitter output to receiver input across the full link. This is the figure used for real-time applications such as live video and 5G control signalling.

- Round-trip latency (RTT): The delay between sending a signal and receiving the response. On a path with symmetric forward and reverse routes this is twice the one-way latency. It is what application-layer tools such as ping typically measure.

Latency and bandwidth are independent. A trans-Pacific cable can carry terabits per second (high bandwidth) while still imposing tens of milliseconds of one-way delay (high latency). Reducing latency requires reducing physical path length or processing overhead — bandwidth expansion does not help.

2.2 Components of Total System Latency

In a coherent optical system, the total one-way latency is the sum of several distinct contributions:

3. Factors Affecting Latency

3.1 Distance

The single largest contributor to latency in long-haul optical systems is the physical distance between endpoints. Light travels through silica glass fibre at approximately 204,000 km/s (versus 299,792 km/s in vacuum), imposing an inescapable propagation delay of approximately 4.9 µs per kilometre. This fundamental limit cannot be reduced by any amount of electronic optimisation — the only remedy is a shorter physical path, which is why route geometry is a primary consideration in low-latency network design.

3.2 Fibre Type and Quality

The refractive index of the fibre core determines how fast light travels through it. Single-mode fibre (ITU-T G.652) has a core refractive index of approximately 1.4677 at 1550 nm, giving a propagation speed of ~204,300 km/s. Multimode fibre typically has a slightly higher core refractive index, which is a consequence of its more heavily doped core rather than of the larger core diameter itself, and so propagates light marginally more slowly. For coherent long-haul systems, single-mode fibre is always used. Signal loss and chromatic dispersion affect signal quality but have negligible direct effect on propagation delay; however, the need for regeneration or dispersion compensation adds processing latency.

3.3 Optical Amplifiers

Erbium-doped fibre amplifiers (EDFAs) add a small but measurable amount of latency. The transit time through approximately 10 metres of erbium-doped fibre at the core of an EDFA corresponds to roughly 50 nanoseconds. In systems with 20 or more amplifiers, this can accumulate to a microsecond or more. Raman amplifiers, being distributed along the transmission fibre, add no discrete latency beyond the fibre propagation delay already accounted for.
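The amplifier figures above can be checked with a short calculation. This is a minimal sketch, assuming the erbium-doped fibre has the same refractive index as the G.652 value quoted in this article (an assumption, since the doped fibre's index is not given); the function name `edfa_transit_ns` is illustrative.

```python
C_KM_PER_S = 299_792.0  # speed of light in vacuum (km/s), as used in this article
N_ASSUMED = 1.4677      # assumption: doped fibre index taken equal to the G.652 value


def edfa_transit_ns(edf_length_m: float, n: float = N_ASSUMED) -> float:
    """Transit time in nanoseconds through edf_length_m metres of fibre."""
    length_km = edf_length_m / 1000.0
    return length_km * n / C_KM_PER_S * 1e9


per_amp_ns = edfa_transit_ns(10.0)      # one EDFA with ~10 m of doped fibre
chain_us = 20 * per_amp_ns / 1000.0     # 20 amplifiers in cascade, in microseconds

print(f"Per amplifier: {per_amp_ns:.1f} ns")
print(f"20-amplifier chain: {chain_us:.2f} us")
```

Under these assumptions the per-amplifier figure comes out close to 50 ns, and a 20-amplifier chain lands near 1 µs, in line with the text.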

3.4 DSP Processing

Modern coherent transceivers rely on sophisticated digital signal processors (DSPs) for modulation, demodulation, chromatic dispersion compensation, polarisation demultiplexing, carrier recovery, and forward error correction (FEC) decoding. Each of these functions introduces a pipeline delay. At 400G and beyond, the cumulative DSP pipeline latency in a single transceiver typically ranges from 1 µs to around 10 µs depending on the FEC algorithm, baud rate, and the number of DSP stages in the pipeline.

FEC is a particularly important contributor: more powerful codes such as enhanced soft-decision FEC involve iterative decoding that increases latency compared to simpler hard-decision schemes. This is a design trade-off: a simpler, lower-overhead FEC gives lower latency but provides less coding gain, so the link must operate at a higher OSNR.

3.5 Switching and Routing Elements

Reconfigurable optical add-drop multiplexers (ROADMs) and optical cross-connects introduce latency through their liquid crystal on silicon (LCoS) or MEMS switching elements. A single ROADM node typically adds between 1 µs and 50 µs of latency depending on implementation. In a heavily interconnected mesh with many ROADM pass-throughs, this accumulates and may be significant for latency-sensitive applications.

3.6 Modulation and Demodulation

The process of encoding data onto a coherent optical carrier (I/Q modulation, polarisation multiplexing) and recovering it at the receiver (90-degree hybrid, balanced photodetection, analogue-to-digital conversion) each contribute latency. These contributions are embedded within the overall DSP pipeline delay and are typically reported together as the transceiver latency specification.

4. The Physics of Propagation Delay

4.1 Deriving the Fibre Propagation Delay

The speed at which light travels through an optical fibre is determined by the refractive index of the core material. By the definition of refractive index, the speed of light in any medium is:

Speed of Light in Fibre

$$v = \frac{c}{n}$$

Where:

- $v$ = speed of light in fibre (km/s)

- $c$ = speed of light in vacuum = 299,792 km/s

- $n$ = refractive index of the fibre core (dimensionless); for G.652 SMF at 1550 nm, $n \approx 1.4677$

The one-way propagation delay for a given link distance is then:

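One-Way Fibre Propagation Delay

$$t_{\text{fibre}}\ (\mu\text{s}) = \frac{d\ (\text{km}) \times n}{c\ (\text{km/s})} \times 10^{6} = \frac{d \times n}{299{,}792} \times 10^{6} \approx d \times 4.9\ \mu\text{s/km} \quad (\text{for } n = 1.4677)$$

Round-trip delay:

$$t_{\text{RT}} = 2 \times t_{\text{fibre}} \approx d \times 9.8\ \mu\text{s/km}$$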

Some references cite a simplified formula of the form "latency = distance × n × 2" without dividing by the speed of light. This produces a dimensionally inconsistent result and gives incorrect values. Always use the physics-based formula above: t(µs) = d × n / 299,792 × 10⁶. For quick estimates, the industry rule of thumb is ~5 µs per km one-way for standard single-mode fibre.

4.2 Verified Example — Propagation Only

Given: d = 100 km, n = 1.4677 (G.652 SMF at 1550 nm), c = 299,792 km/s

One-Way Fibre Delay

$$t_{\text{fibre}} = \frac{100 \times 1.4677}{299{,}792} \times 10^{6} = \frac{146.77}{299{,}792} \times 10^{6} \approx 489.6\ \mu\text{s} \approx 0.490\ \text{ms (one-way)}$$

Round-trip: 2 × 489.6 = 979.2 µs ≈ 0.979 ms

This confirms the industry rule of thumb: approximately 4.9 µs per km one-way, or roughly 1 ms of round-trip delay per 100 km of fibre.
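The derivation and the 100 km example can also be checked numerically. The following is a minimal sketch of the formula t = d × n / c × 10⁶; the function names `fibre_delay_us` and `round_trip_us` are illustrative, and the round-trip helper assumes the forward and reverse paths have equal length.

```python
C_KM_PER_S = 299_792.0  # speed of light in vacuum (km/s)
N_G652 = 1.4677         # core refractive index of G.652 SMF at 1550 nm


def fibre_delay_us(distance_km: float, n: float = N_G652) -> float:
    """One-way fibre propagation delay in microseconds."""
    return distance_km * n / C_KM_PER_S * 1e6


def round_trip_us(distance_km: float, n: float = N_G652) -> float:
    """Round-trip delay in microseconds, assuming a symmetric path."""
    return 2.0 * fibre_delay_us(distance_km, n)


print(f"Per km:  {fibre_delay_us(1.0):.3f} us")
print(f"100 km:  {fibre_delay_us(100.0):.1f} us one-way")
print(f"100 km:  {round_trip_us(100.0):.1f} us round-trip")
```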

5. Calculating Total System Latency

5.1 The Complete Formula

The total one-way latency of a coherent optical system is the sum of all component delays along the signal path:

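Total One-Way System Latency

$$t_{\text{total}} = t_{\text{fibre}} + t_{\text{amplifiers}} + t_{\text{ROADM}} + t_{\text{DSP}} + t_{\text{mod}} + t_{\text{demod}}$$

Where:

- $t_{\text{fibre}} = d \times n / c \times 10^{6}$ (µs)

- $t_{\text{amplifiers}} = N_{\text{amp}} \times t_{\text{per amp}}$ (typically 0.05–0.1 µs each)

- $t_{\text{ROADM}} = N_{\text{ROADM}} \times t_{\text{per node}}$ (typically 1–50 µs each)

- $t_{\text{DSP}}$ = pipeline latency of the coherent DSP (1–10 µs per transceiver)

- $t_{\text{mod}}$, $t_{\text{demod}}$ = included in the DSP pipeline figure above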

5.2 Worked Example

System parameters:

- Distance: 400 km total (5 × 80 km spans), single-mode fibre, n = 1.4677

- 5 EDFAs, per-amplifier transit delay: 0.08 µs each

- 2 ROADM pass-throughs: 5 µs each

- Coherent DSP pipeline (Tx + Rx combined): 8 µs total

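$$\begin{aligned}
t_{\text{fibre}} &= \frac{400 \times 1.4677}{299{,}792} \times 10^{6} \approx 1{,}958.3\ \mu\text{s} \\
t_{\text{amplifiers}} &= 5 \times 0.08 = 0.4\ \mu\text{s} \\
t_{\text{ROADM}} &= 2 \times 5.0 = 10.0\ \mu\text{s} \\
t_{\text{DSP}} &= 8.0\ \mu\text{s} \\
t_{\text{total}} &\approx 1{,}976.7\ \mu\text{s} \approx 1.977\ \text{ms}
\end{aligned}$$

Round-trip = 2 × 1,976.7 = 3,953.4 µs ≈ 3.953 ms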

Fibre propagation accounts for 99.1% of the total one-way latency in this example. At longer distances, this dominance increases further; at metro distances of 20–50 km, processing delays become a more significant fraction.
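The worked example can be reproduced as a small budget model. This is a sketch under the parameters listed in section 5.2; the `LinkBudget` class and its field names are illustrative and not part of any standard tool.

```python
from dataclasses import dataclass

C_KM_PER_S = 299_792.0  # speed of light in vacuum (km/s)


@dataclass
class LinkBudget:
    distance_km: float
    n: float = 1.4677            # G.652 SMF at 1550 nm
    n_amps: int = 0
    amp_delay_us: float = 0.08   # per-EDFA transit delay from the example
    n_roadms: int = 0
    roadm_delay_us: float = 5.0  # per-ROADM delay from the example
    dsp_delay_us: float = 8.0    # Tx + Rx DSP pipeline combined

    def breakdown_us(self) -> dict:
        """Per-component one-way latency in microseconds."""
        return {
            "fibre": self.distance_km * self.n / C_KM_PER_S * 1e6,
            "amplifiers": self.n_amps * self.amp_delay_us,
            "roadm": self.n_roadms * self.roadm_delay_us,
            "dsp": self.dsp_delay_us,
        }

    def total_us(self) -> float:
        return sum(self.breakdown_us().values())


link = LinkBudget(distance_km=400, n_amps=5, n_roadms=2)
parts = link.breakdown_us()
total = link.total_us()
fibre_share = parts["fibre"] / total

for name, value in parts.items():
    print(f"{name:>10}: {value:9.1f} us")
print(f"{'total':>10}: {total:9.1f} us (fibre share {fibre_share:.1%})")
```

Changing `distance_km` to a metro value such as 20 with the same processing figures shows how the fibre share drops once the path is short.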

6. Live Latency Calculator

Adjust any slider to instantly recalculate the full latency breakdown and visualise the component contributions. The chart updates in real time as parameters change.

7. Latency in Application Contexts

The acceptable latency depends entirely on the application. The same physical infrastructure may be perfectly adequate for one use case and completely unsuitable for another. Understanding these requirements is essential for network dimensioning and route selection.

5G Fronthaul (eCPRI)

The 3GPP split architecture for 5G requires tight timing between distributed radio units and centralised baseband units. Fronthaul links are typically limited to distances of less than 10–20 km, and the allowed optical fibre budget is only a fraction of the total timing error allowed between the radio units and the network clock.

Data Centre Interconnect (DCI)

Metro DCI networks linking availability zones within a cloud region must achieve round-trip latencies of a few milliseconds or less to support synchronous data replication. This constrains the physical distance between data centres to roughly 100 km, limiting inter-site propagation to approximately 0.5 ms one-way (about 1 ms round-trip).

High-Frequency Trading

Financial trading systems require the lowest achievable latency between exchange co-location facilities. Networks are routed along the most direct geographic paths, and low-latency transponders with minimal DSP pipeline depth are preferred over higher-capacity coherent systems that introduce more processing delay.

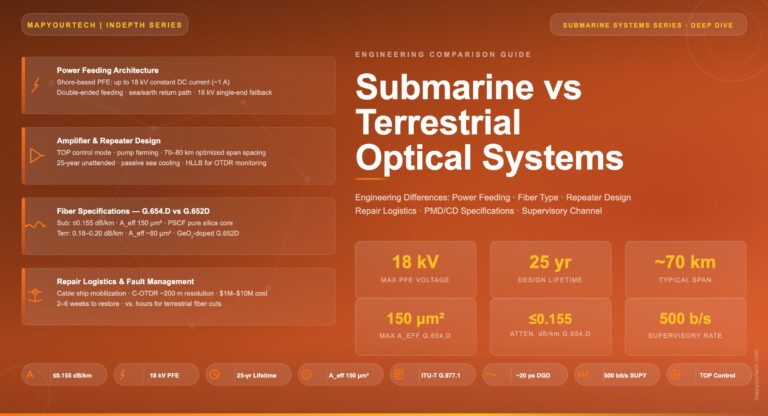

Submarine Long-Haul

Transoceanic submarine cable systems carry traffic with latencies dominated entirely by physical distance — a trans-Atlantic path of around 6,600 km imposes approximately 32 ms of propagation delay one-way. Latency cannot be reduced beyond the physical minimum set by the route length and fibre refractive index.

| Application | Latency Target | Max Distance Implied | Dominant Constraint |
|---|---|---|---|
| 5G Fronthaul (eCPRI) | <100 µs one-way | ~20 km (fibre only) | 3GPP timing budget; worsened by each ROADM hop |
| Metro DCI (sync replication) | < a few ms RTT | ~100 km | Application read/write latency shared with networking |
| Enterprise WAN voice/video | <150 ms one-way | ~15,000 km | Human perception threshold for interactive audio |
| High-frequency trading | <100 µs one-way | ~20 km | Commercial advantage; every µs has economic value |
| OTN transport general | Application-defined | Unlimited | ITU-T G.709 defines OTU frame overhead latency contribution |
| Trans-Atlantic submarine | ~32 ms one-way | ~6,600 km | Physical path length (irreducible) |
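The "fibre only" distances in the table follow from inverting the propagation formula. The sketch below shows that inversion; `max_distance_km` and its `fixed_overhead_us` parameter are illustrative names, and the 13 µs overhead in the second call is a hypothetical figure (one ROADM at 5 µs plus 8 µs of DSP, borrowed from the worked example).

```python
C_KM_PER_S = 299_792.0  # speed of light in vacuum (km/s)
N_G652 = 1.4677         # G.652 SMF at 1550 nm


def max_distance_km(one_way_budget_us: float,
                    fixed_overhead_us: float = 0.0,
                    n: float = N_G652) -> float:
    """Longest fibre path whose propagation delay fits the remaining budget."""
    remaining_us = one_way_budget_us - fixed_overhead_us
    if remaining_us <= 0:
        return 0.0  # processing alone already exceeds the budget
    return remaining_us * 1e-6 * C_KM_PER_S / n


fibre_only = max_distance_km(100.0)                            # 100 us, no overhead
with_overhead = max_distance_km(100.0, fixed_overhead_us=13.0)  # hypothetical overhead
atlantic = max_distance_km(32_000.0)                            # 32 ms one-way

print(f"100 us, fibre only:     {fibre_only:.1f} km")
print(f"100 us, 13 us overhead: {with_overhead:.1f} km")
print(f"32 ms, fibre only:      {atlantic:.0f} km")
```

The overhead case illustrates why each extra processing hop shortens the reachable distance for a fixed target.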

8. Strategies for Reducing Latency

8.1 Physical Path Optimisation

Because propagation delay dominates at distances beyond a few tens of kilometres, the single most effective latency reduction strategy is routing traffic along the shortest physical fibre path between endpoints. This is why low-latency networks are engineered with direct fibre routes — even at significant infrastructure cost — avoiding the longer paths that conventional carrier networks often take through intermediate cities and switching centres.

8.2 Fibre Selection

While all silica single-mode fibres have similar refractive indices (1.44–1.47 range), hollow-core fibres, in which light is guided through air rather than glass, offer a fundamental latency advantage. Light guided in the air core travels at approximately 99.7% of c, giving an effective refractive index close to 1.003 and reducing propagation delay by approximately 31% compared to conventional silica fibre. As hollow-core fibre becomes commercially available at scale, it is likely to be adopted first in latency-critical applications such as financial trading interconnects.
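The size of the hollow-core advantage follows directly from the ratio of the two refractive indices. A minimal sketch, using the index values quoted above (1.003 and 1.4677) and an arbitrary 100 km comparison distance chosen for illustration:

```python
C_KM_PER_S = 299_792.0  # speed of light in vacuum (km/s)
N_SILICA = 1.4677       # G.652 SMF at 1550 nm
N_HOLLOW = 1.003        # effective index quoted for hollow-core fibre


def delay_us(distance_km: float, n: float) -> float:
    """One-way propagation delay in microseconds."""
    return distance_km * n / C_KM_PER_S * 1e6


# Fractional delay reduction depends only on the index ratio, not on distance.
reduction = 1.0 - N_HOLLOW / N_SILICA

distance_km = 100.0  # illustrative comparison distance
saving_us = delay_us(distance_km, N_SILICA) - delay_us(distance_km, N_HOLLOW)

print(f"Delay reduction: {reduction:.1%}")
print(f"Saving over {distance_km:.0f} km: {saving_us:.1f} us one-way")
```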

8.3 Minimising Processing Hops

- Reduce ROADM pass-throughs: Each ROADM node adds 1–50 µs. Choosing express routes that bypass intermediate nodes reduces accumulated switching latency.

- Minimise electronic regeneration: Optical-electrical-optical regeneration sites add DSP pipeline latency at each site. Transparent optical routing avoids this penalty.

- Select lower-latency FEC: Hard-decision FEC with lower overhead (7%) introduces less pipeline latency than enhanced soft-decision FEC (20% overhead). For latency-critical applications where OSNR margin is available, simpler FEC is preferred.

- Use lower-order modulation: Higher-order modulation formats (DP-64QAM) sometimes require more DSP processing stages than simpler formats (DP-QPSK), adding pipeline depth.

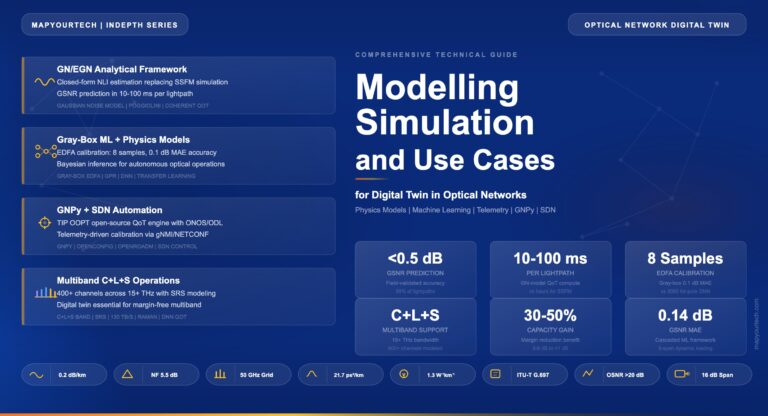

8.4 Latency-Aware Network Design

Modern optical network management systems can route traffic with explicit latency constraints alongside conventional capacity and protection constraints. Software-defined networking (SDN) controllers can compute latency-aware paths by incorporating per-link propagation delay models, switching node delays, and transceiver pipeline budgets into the path computation engine, selecting routes that meet latency service level agreements alongside other objectives.
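One way such a path computation could look is a shortest-path search whose edge weight is propagation delay plus the switching delay of each intermediate node. The sketch below uses Dijkstra's algorithm on a hypothetical four-node topology; the node names, distances, per-node delays, and the 620 µs SLA are all invented for illustration, and real controllers will use their own models.

```python
import heapq

US_PER_KM = 4.9  # rule-of-thumb one-way delay for standard SMF


def lowest_latency_path(links, node_delay_us, src, dst):
    """Dijkstra over latency. links maps node -> list of (neighbour, km)."""
    best = {src: 0.0}
    queue = [(0.0, src, [src])]
    while queue:
        latency, node, path = heapq.heappop(queue)
        if node == dst:
            return latency, path
        if latency > best.get(node, float("inf")):
            continue  # stale queue entry
        for neighbour, km in links.get(node, []):
            hop_us = km * US_PER_KM
            if neighbour != dst:
                # pass-through nodes add their switching delay
                hop_us += node_delay_us.get(neighbour, 0.0)
            candidate = latency + hop_us
            if candidate < best.get(neighbour, float("inf")):
                best[neighbour] = candidate
                heapq.heappush(queue, (candidate, neighbour, path + [neighbour]))
    return None  # dst unreachable


# Hypothetical topology: A-B-D is shorter in km, but B is a slow node.
links = {
    "A": [("B", 60.0), ("C", 50.0)],
    "B": [("A", 60.0), ("D", 60.0)],
    "C": [("A", 50.0), ("D", 75.0)],
    "D": [("B", 60.0), ("C", 75.0)],
}
node_delay_us = {"B": 50.0, "C": 5.0}

latency_us, path = lowest_latency_path(links, node_delay_us, "A", "D")
sla_us = 620.0  # hypothetical one-way latency SLA
meets_sla = latency_us <= sla_us
print(path, f"{latency_us:.1f} us", "meets SLA" if meets_sla else "violates SLA")
```

In this made-up example the route with more fibre wins because its intermediate node is faster, which is the kind of trade-off a distance-only metric would miss.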

For any latency-sensitive application, begin by calculating the irreducible propagation floor: the one-way delay imposed by the physical fibre path at approximately 4.9 µs/km. All other latency sources — amplifiers, ROADMs, DSP — must fit within whatever budget remains after this floor is subtracted from the application's latency requirement.
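The budgeting step described above can be sketched as a simple check: subtract the propagation floor from the requirement, then see whether the processing contributions fit in what remains. The function name `allocate_budget` and the example processing figures (5 µs for one ROADM, 8 µs for DSP) are assumptions taken from the earlier worked example, not recommended values.

```python
US_PER_KM = 4.9  # approximate one-way propagation floor for standard SMF


def allocate_budget(requirement_us: float, distance_km: float,
                    processing_us: dict) -> dict:
    """Split a one-way latency requirement into floor, processing, and slack."""
    floor_us = distance_km * US_PER_KM
    available_us = requirement_us - floor_us       # what is left for equipment
    used_us = sum(processing_us.values())
    return {
        "floor_us": floor_us,
        "available_us": available_us,
        "processing_us": used_us,
        "slack_us": available_us - used_us,
        "fits": used_us <= available_us,
    }


processing = {"roadm": 5.0, "dsp": 8.0}  # hypothetical equipment delays

short_link = allocate_budget(100.0, 15.0, processing)  # 15 km against 100 us
long_link = allocate_budget(100.0, 19.0, processing)   # 19 km against 100 us

print(short_link)
print(long_link)
```

With these figures the 15 km link leaves slack, while the 19 km link does not fit even though its fibre delay alone is under 100 µs.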

9. Latency and Jitter

Latency and jitter are related but distinct performance metrics, and they affect different applications differently.

Latency is the absolute delay — the time from when a bit enters the transmitter to when it exits the receiver. It is essentially fixed for a given path and system configuration, though it can vary slowly with temperature (fibre length changes slightly with temperature) or when the optical path is rerouted following a protection switch.

Jitter is the variation in latency over time. Packet delay variation (PDV), the preferred term in ITU-T G.8261, refers to the difference in end-to-end delay experienced by successive packets. In optical transport, jitter originates primarily from variable queuing and buffering delays in packet-layer equipment rather than from the optical layer itself, which contributes essentially fixed propagation delay. For synchronisation applications such as IEEE 1588 Precision Time Protocol (PTP), both absolute latency and jitter must be managed, since asymmetric latency between forward and reverse paths can cause timing errors.

| Property | Latency | Jitter (PDV) |
|---|---|---|
| Definition | Absolute end-to-end delay of a signal | Variation in delay between successive packets or symbols |
| Primary source in optical layer | Fibre propagation + processing pipeline | Packet buffering in IP/Ethernet layer; minimal in optical layer |
| Typical magnitude (optical) | µs to ms depending on distance | Nanoseconds to microseconds |
| Affected applications | All real-time applications; trading; 5G fronthaul | Synchronisation (PTP, SyncE); voice/video streaming |
| Reduction strategy | Shorter paths, fewer processing hops | Traffic shaping, timing protocol, packet buffering management |
| Relevant standard | ITU-T G.709, G.8271 | ITU-T G.8261, IEEE 802.1AS |
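The distinction between the two metrics, and the PTP sensitivity to path asymmetry mentioned above, can be illustrated with a few lines of arithmetic. This is a sketch on made-up delay samples: the sample values are hypothetical, peak-to-peak spread is only one simple way to summarise PDV, and the offset-error formula reflects the standard PTP assumption that forward and reverse delays are equal.

```python
def successive_delay_differences_us(delays_us):
    """Delay difference between each packet and the one before it."""
    return [b - a for a, b in zip(delays_us, delays_us[1:])]


def peak_to_peak_pdv_us(delays_us):
    """Spread between the slowest and fastest packet (one simple PDV summary)."""
    return max(delays_us) - min(delays_us)


def ptp_offset_error_us(forward_us, reverse_us):
    """Clock offset error when PTP assumes symmetric paths: half the asymmetry."""
    return (forward_us - reverse_us) / 2.0


# Hypothetical one-way delays (us) for five successive packets on one path.
samples = [1976.7, 1977.1, 1976.9, 1978.4, 1976.8]
diffs = successive_delay_differences_us(samples)
pdv = peak_to_peak_pdv_us(samples)

# Hypothetical asymmetry: the reverse fibre is 1 km longer (about 4.9 us).
error = ptp_offset_error_us(forward_us=1976.7, reverse_us=1981.6)

print(f"Successive differences: {[round(d, 1) for d in diffs]} us")
print(f"Peak-to-peak PDV: {pdv:.1f} us")
print(f"PTP offset error from asymmetry: {error:.2f} us")
```

The mean delay here is close to 2 ms while the variation is under 2 µs, and a single kilometre of uncompensated asymmetry produces a timing error of a couple of microseconds.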

10. Frequently Asked Questions

11. Conclusion

Latency in coherent optical systems is governed primarily by the physics of light propagation through glass fibre — an irreducible constraint of approximately 4.9 µs per kilometre for standard single-mode fibre. Understanding this floor is the starting point for all latency engineering. The remaining contributions — amplifier transit times, ROADM switching delays, and DSP pipeline latency — are manageable through architecture choices, component selection, and route optimisation, but they are typically minor compared to propagation delay at distances beyond 30 km.

As applications become more latency-sensitive — from 5G URLLC to synchronous multi-site cloud computing — optical networks must be designed with explicit latency budgets assigned to each element of the signal path. The live calculator in this article provides a practical tool for that purpose, allowing engineers to explore how each parameter contributes to the total system delay and to identify which changes deliver the greatest latency benefit for a given design.

Looking ahead, hollow-core photonic bandgap fibre — which guides light through air rather than silica — promises to reduce propagation delay by approximately 31%, offering a step change in latency performance for applications where the physical path length cannot be shortened.

- Fibre propagation delay = d × n / c ≈ 4.9 µs/km one-way for G.652 SMF — this floor is irreducible by electronic means.

- Total system latency adds amplifier transit (<0.1 µs each), ROADM switching (1–50 µs each), and DSP pipeline (1–10 µs per transceiver end).

- Application latency targets range from <100 µs (5G fronthaul, HFT) to tens of milliseconds (long-haul enterprise) — each requires explicit budget allocation.

- Latency and jitter are distinct: optical propagation adds constant delay; jitter originates mainly in the packet layer above the optical transport.

- Hollow-core fibre will reduce propagation delay by ~31%, representing the next fundamental advancement in optical latency reduction.

Glossary

References

Note: This guide is based on industry standards, best practices, and real-world implementation experience. Specific values vary by equipment, fibre type, and deployment configuration. Consult qualified optical network engineers for actual system design.

Optical Networking Engineer & Architect • Founder, MapYourTech

Optical networking engineer with nearly two decades of experience across DWDM, OTN, coherent optics, submarine systems, and cloud infrastructure. Founder of MapYourTech.