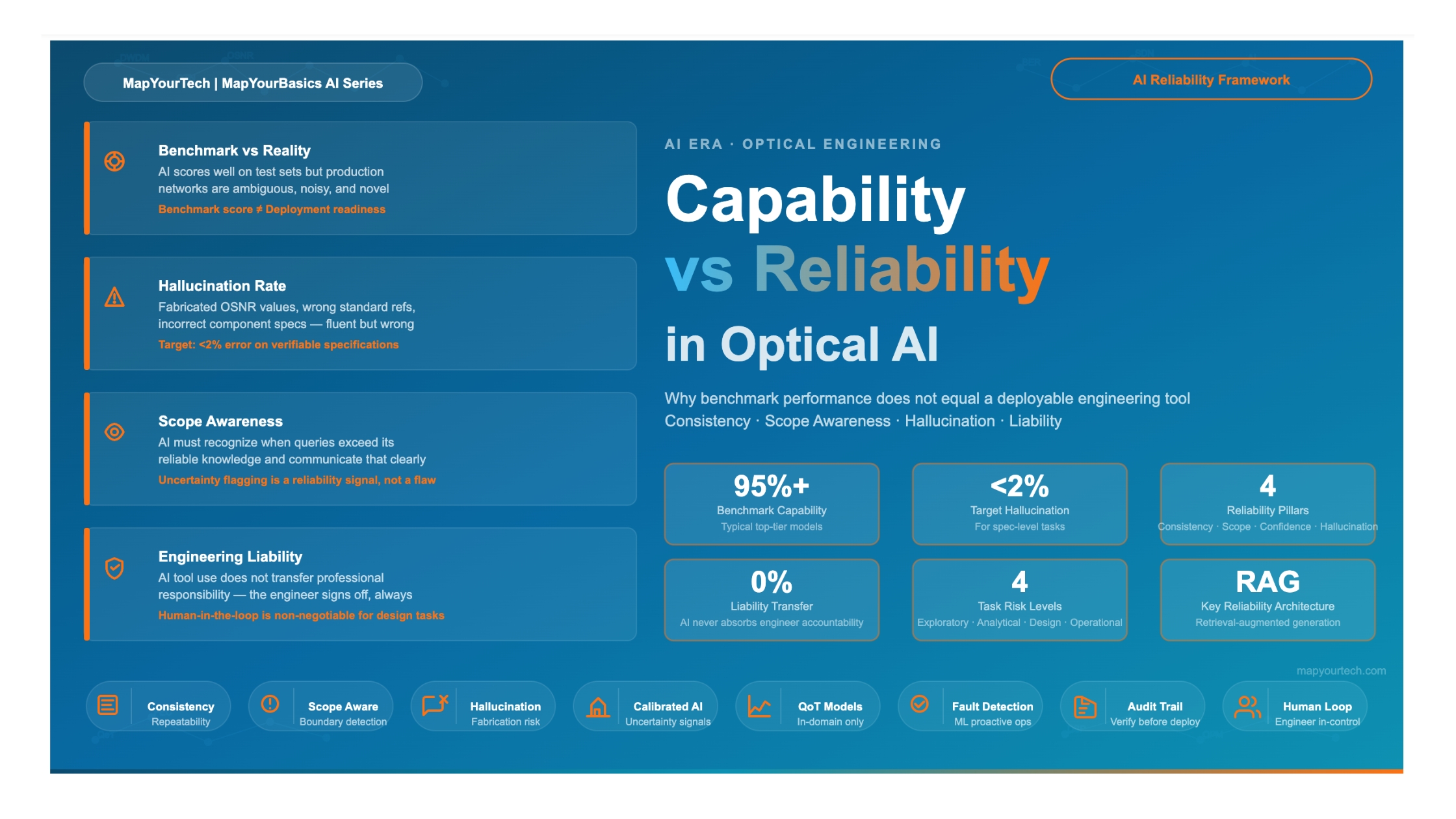

Capability vs Reliability:

The AI Distinction That Matters in Optical Engineering

Why a model that scores well on benchmarks is not the same as a deployable tool — covering consistency, scope awareness, hallucination rate, and liability in the context of optical network engineering.

1. Introduction

Optical networks carry the backbone of global communications — from terrestrial long-haul transport links to submarine cable systems spanning ocean floors. The decisions made during network planning, design, and operations have direct consequences: a miscalculated optical signal-to-noise ratio (OSNR) budget, an incorrect dispersion map, or an unsupported amplifier gain model can result in costly rework, service degradation, or outright system failure.

Artificial intelligence (AI) tools, and in particular large language models (LLMs) and machine learning (ML)-based assistants, are entering this domain at a rapid pace. Vendors, startups, and network operators alike are exploring how these tools can accelerate design cycles, automate fault analysis, and assist engineers in interpreting complex performance data. The marketing around these tools is compelling — benchmarks showing strong accuracy on technical question sets, demonstrations of rapid OSNR estimation, or examples of fault classification that would otherwise take an experienced engineer hours to complete.

However, there is a distinction that every optical engineer must understand before committing an AI tool to any production workflow: capability and reliability are not the same thing. A model that performs impressively on a benchmark dataset may behave very differently when posed with the nuanced, context-specific, often ambiguous questions that arise in real network engineering. The benchmark shows what an AI can do under controlled conditions. Reliability describes what it consistently does across the full range of scenarios an engineer will actually encounter.

This article examines that distinction in detail — what it means in the context of optical engineering, why it matters for deployability decisions, and how engineers and their organizations can develop a structured approach to evaluating AI tools before integrating them into technical workflows.

Scope of this Article

This article focuses on AI tools used in support of optical network engineering tasks: system design, optical performance monitoring (OPM), fault management, and planning assistance. The analysis applies broadly to LLM-based assistants, ML-based analytics platforms, and hybrid tools that combine trained models with structured domain knowledge.

2. Defining Capability and Reliability in the AI Context

2.1 What Capability Means for an AI Tool

In the context of AI evaluation, capability refers to the maximum observed performance of a model on a defined task under optimal conditions. Benchmarks measure capability. They are constructed to test specific skills — reading comprehension, mathematical reasoning, code generation, or domain knowledge — using curated question sets where correct answers are known and scoring is deterministic.

For optical engineering specifically, capability benchmarks might measure whether an AI tool can correctly answer questions about DWDM channel spacing per ITU-T G.694.1, estimate the Shannon-limit signal-to-noise ratio required for a given spectral efficiency, classify a set of bit error rate (BER) trends as indicating chromatic dispersion or polarization mode dispersion (PMD), or generate a properly structured optical link budget calculation.

Performing well on such benchmarks is genuinely meaningful. It shows that the underlying model has been exposed to, and can correctly recall or reason about, relevant technical content. The question is what that performance predicts about real-world use.

2.2 What Reliability Means for an AI Tool

Reliability is a different measurement entirely. It asks: across all the queries and scenarios an engineer will actually encounter — including ambiguous questions, edge cases, incomplete inputs, and novel configurations — how consistently does the tool produce correct, complete, and appropriately scoped outputs?

Four properties define reliability for AI tools in technical engineering contexts:

Consistency

The same question posed in different phrasings, or at different times, should produce the same correct answer. An inconsistent AI is not a reliable engineering reference, regardless of its benchmark score.

Scope Awareness

The tool should recognize the boundaries of its own knowledge. When it cannot answer with confidence, it must communicate that clearly rather than producing plausible-sounding but incorrect information.

Hallucination Rate

AI models can generate fluent, confident-sounding text that is technically incorrect. In optical engineering, a hallucinated OSNR value, a fabricated ITU-T standard number, or an incorrect component specification can propagate through a design with serious consequences.

Calibrated Uncertainty

A reliable AI tool expresses appropriate uncertainty. It should distinguish between what is established in a referenced standard, what is a typical industry value, and what is a model-generated estimate — treating these three categories very differently in its outputs.

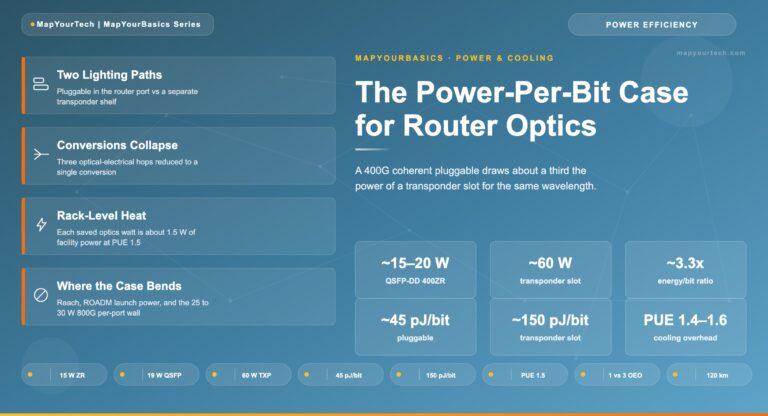

Figure 1: Capability vs Reliability — Illustrative Comparison Across AI Evaluation Dimensions

Figure 1: Radar chart comparing a hypothetical high-capability/low-reliability model versus a moderate-capability/high-reliability model across engineering deployment criteria. Values are illustrative for conceptual clarity.

3. Why Benchmark Performance Does Not Equal Deployability

3.1 Benchmark Conditions vs Real Engineering Conditions

A well-constructed benchmark evaluates an AI model under controlled conditions: questions are drawn from known domains, answers have definitive correctness criteria, and the scoring methodology is clearly defined. These conditions exist in direct tension with the conditions an optical engineer encounters in day-to-day work.

Real engineering queries are often incomplete. An engineer asking for help with a link budget might provide partial information — some fiber spans, some amplifier placements, but missing the connector loss budget or target BER. A real optical network has measurement uncertainties, aging components, and configurations that deviate from catalog specifications. The questions are not clean benchmark items. They are messy, context-dependent, and often require the AI to acknowledge what it cannot determine from the information provided.

Benchmarks also tend to test known-answer questions. They do not test the AI's behavior in genuinely novel scenarios, which is precisely where engineers most need reliable assistance. In optical engineering, novel scenarios arise constantly: new modulation formats, proprietary line system behaviors, or failure modes that do not match standard textbook patterns.

3.2 Distribution Shift: The Training-Deployment Gap

AI models learn from training data. The model's performance on a benchmark reflects its understanding of the data distribution that benchmark represents. When deployed in a real optical network environment, the queries and data the tool encounters may differ significantly from that training distribution — a phenomenon known as distribution shift.

For optical engineering, distribution shift is particularly relevant because the domain is specialized, evolving, and often characterized by proprietary configurations. A model trained on publicly available technical literature may have strong foundational knowledge but limited exposure to the specifics of a particular network operator's topology, a specific generation of line system hardware, or a non-standard amplification architecture used in a submarine deployment.

Distribution Shift Risk in Optical Tools

An AI tool that performs accurately on standard DWDM textbook problems may generate incorrect outputs when applied to a specific network's margin calculations, where proprietary EDFA gain flatness profiles, non-standard channel counts, or vendor-specific noise figure specifications deviate from the tool's training data assumptions.

3.3 Confidence Scoring Does Not Reflect Engineering Accuracy

Many AI models produce outputs with high linguistic confidence — well-formed sentences, technically appropriate terminology, and a presentation style that reads as authoritative — regardless of whether the underlying answer is correct. This is a well-documented property of large language models, and it presents a specific hazard in technical engineering contexts where the presentation quality of an answer is not a reliable indicator of its accuracy.

In optical network design, this can manifest as confidently stated but incorrect specifications. A model might confidently state a target OSNR value that is appropriate for a different modulation format, name a standard recommendation that does not exist, or produce a link budget calculation that has correct structure but incorrect numerical values in one or more terms. An engineer unfamiliar with the specific parameter being checked might accept the output without verification.

4. Hallucination Rate in Technical Domains

4.1 What Hallucination Means in Practice

AI hallucination refers to the generation of outputs that are fluent, plausible-sounding, and presented with confidence, but which are factually incorrect, fabricated, or based on incorrect reasoning. In general-purpose language tasks, hallucination can be an inconvenience. In optical engineering, it can be a serious problem.

The consequences of hallucination scale with how the output is used. An AI assistant used for initial orientation — helping an engineer quickly understand an unfamiliar concept — has a lower risk profile than one integrated into a design workflow that produces specifications directly used in equipment procurement or system configuration. As the tool moves closer to the output side of an engineering process, the acceptable hallucination rate drops toward zero for any specification that feeds into a deployment decision.

4.2 Categories of Hallucination Relevant to Optical Engineering

Several types of hallucination are particularly relevant to the optical networking domain:

Specification Hallucination

The model generates a plausible but incorrect numerical specification, for example quoting an incorrect noise figure range for an EDFA type, or providing an incorrect chromatic dispersion coefficient for a fiber category. These errors are dangerous because the values fall within the expected order of magnitude and therefore look reasonable.

Standard Reference Hallucination

The model cites a standards document that does not exist, or attributes content to a standard that covers a different topic. For example, attributing a specific OTN frame structure detail to an incorrect ITU-T recommendation number, or referencing a non-existent OIF implementation agreement.

Procedural Hallucination

The model describes a troubleshooting or configuration procedure that contains one or more incorrect steps, interspersed with correct steps in a sequence that looks plausible. This is particularly hazardous because partially correct procedures can cause engineers to invest time implementing a flawed approach before discovering the error.

Causal Hallucination

The model incorrectly attributes a network behavior or impairment to the wrong cause. For example, identifying a BER degradation pattern as indicating cross-phase modulation (XPM) when the actual cause is stimulated Raman scattering (SRS), or misclassifying a hard failure signature as a soft failure.

Figure 2: Illustrative Hallucination Risk Profile by Task Category in Optical Engineering AI Tools

Figure 2: Relative hallucination risk across different task types. Risk increases as outputs approach actionable engineering specifications. Values are illustrative based on established AI reliability research patterns.

4.3 Factors That Increase Hallucination Risk

Several factors increase the probability of hallucination when using AI tools in optical engineering contexts:

Specificity of the query: General questions about optical networking principles are better covered in training data than highly specific questions about a particular network topology, equipment generation, or non-standard configuration. As queries become more specific, the model is more likely to extrapolate beyond its reliable knowledge.

Recency of the information: AI models have training cutoff dates. Standards, technology capabilities, and market-relevant specifications evolve. A model trained on data through a particular date will not have reliable knowledge of standards published, updated, or revised after that date — and may not accurately reflect the current status of standards that were updated near its cutoff.

Domain depth at the edge of training coverage: Topics that are well-represented in publicly available technical literature — foundational DWDM principles, standard OTN mapping, basic OSNR calculations — carry lower hallucination risk than topics at the edges of the model's training coverage, such as proprietary line system behaviors, advanced submarine equalization techniques, or very recent coherent DSP developments.

5. Scope Awareness: Knowing What You Do Not Know

Scope awareness is the property of an AI system that allows it to recognize when a query falls outside its reliable knowledge domain and to communicate that boundary clearly rather than generating speculative content with the same confidence it uses for well-grounded information.

For optical engineering, scope awareness is critical because the domain has multiple levels of knowledge. At the foundational level — fiber transmission physics, coherent modulation principles, OTN hierarchy, DWDM channel plans — the knowledge is well-documented, stable, and broadly available in training data. At the applied level — specific equipment behaviors, vendor-particular implementations, live network configurations — the knowledge is narrower, often proprietary, and varies substantially from one deployment to another.

5.1 The Danger of an Over-Confident AI in Engineering

An AI tool that lacks scope awareness will attempt to answer questions at both levels with similar confidence. When asked about a specific amplifier's gain tilt behavior at a particular operating point, a scope-unaware AI may generate a plausible-sounding answer based on general EDFA principles, without flagging that the specific values it provides are extrapolated rather than drawn from verified specifications for that equipment.

The risk compounds when engineers work under time pressure. In a network operations context, an engineer troubleshooting a degraded link at 2 AM does not have time to verify every AI output against original datasheets. If the AI tool does not clearly distinguish between "this is a standard industry principle" and "this is my best estimate for your specific configuration," the engineer may act on an estimate as if it were a confirmed specification.

5.2 How Scope-Aware AI Should Behave

A scope-aware AI tool, when faced with a query at the boundary of its reliable knowledge, should exhibit specific behaviors: it should flag that the query involves parameters that may be equipment-specific, vendor-specific, or otherwise outside its verified knowledge; it should provide the most applicable general principles while clearly labeling them as such; and it should direct the engineer to the appropriate authoritative source — a vendor datasheet, a specific ITU-T recommendation, or a manufacturer's configuration guide — rather than attempting to substitute for those sources.

This behavior is more valuable than superficially impressive performance on a benchmark. An AI that correctly answers 95 out of 100 benchmark questions but does not flag the 5 it is uncertain about is less useful in production than an AI that correctly answers 90 and clearly flags the remaining 10 as needing external verification.
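
The arithmetic behind this comparison can be made explicit. The sketch below is illustrative only: `ToolResult` and its field names are hypothetical, and it assumes the second tool flags exactly the ten answers it does not get right. It separates benchmark accuracy from the share of wrong answers that reach the engineer with no warning.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolResult:
    """Hypothetical tally of one tool's answers on a 100-question set."""
    correct: int          # answers that were right
    wrong_flagged: int    # answers not right, but flagged for verification
    wrong_unflagged: int  # wrong answers presented with no warning

    @property
    def total(self) -> int:
        return self.correct + self.wrong_flagged + self.wrong_unflagged

    @property
    def benchmark_accuracy(self) -> float:
        return self.correct / self.total

    @property
    def silent_error_rate(self) -> float:
        # Errors the engineer has no cue to double-check.
        return self.wrong_unflagged / self.total


tool_a = ToolResult(correct=95, wrong_flagged=0, wrong_unflagged=5)
tool_b = ToolResult(correct=90, wrong_flagged=10, wrong_unflagged=0)

for name, tool in (("A", tool_a), ("B", tool_b)):
    print(name, tool.benchmark_accuracy, tool.silent_error_rate)
```

Tool A wins on benchmark accuracy, but only tool B has a silent error rate of zero, which is the figure that matters when outputs feed a design.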

Practical Scope Test for AI Tools

A useful evaluation technique is to deliberately pose questions to an AI tool that you know are either unanswerable from general knowledge (requiring specific vendor data) or questions that have changed recently (updated standards). A reliable tool will acknowledge uncertainty or flag its knowledge limits. A capability-only tool will generate a confident answer regardless.

6. Consistency: The Operational Reliability Test

Consistency is the most direct operational measure of AI reliability. It tests whether the same question — or semantically equivalent versions of the same question — reliably produces the same correct answer across repeated queries.

6.1 Why Inconsistency Is Unacceptable in Engineering Tools

Engineering tools must be deterministic in their outputs for any given set of inputs. A calculator that returns different values for the same link budget calculation on different occasions is not a calculator — it is an uncertainty generator. The same principle applies to AI tools used in optical engineering support functions.

Inconsistency in AI outputs stems from the probabilistic nature of large language models. These models do not look up answers in a database; they generate tokens based on learned probability distributions. This generative process introduces variability, particularly for questions that have multiple plausible continuations or that touch the boundaries of the model's training data.

For optical engineering applications, the following query types carry elevated inconsistency risk:

Numerical queries with multiple valid formats: Asking for an OSNR requirement expressed in dB for a 100G coherent PM-QPSK signal may produce different values depending on whether the model uses a target pre-FEC BER of 10⁻³ or 10⁻², what FEC overhead assumption it applies, and what system margin convention it uses. All of these are legitimate engineering choices, but if the AI does not explicitly state its assumptions, an engineer receiving different answers at different times has no way to determine which answer is correct for their context.
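
To see how much a single unstated assumption can move the answer, the sketch below computes an idealized required OSNR for PM-QPSK at two pre-FEC BER targets. It is a textbook approximation, not a vendor sensitivity model. It assumes Gray-coded QPSK limited only by additive Gaussian noise, a 12.5 GHz (0.1 nm) reference bandwidth, and the common conversion OSNR = p · Rs / (2 · Bref) · SNR. The 32 Gbaud symbol rate and the function name are illustrative choices, and no implementation penalty or system margin is included.

```python
import math
from statistics import NormalDist

REF_BANDWIDTH_HZ = 12.5e9  # 0.1 nm reference bandwidth near 1550 nm


def required_osnr_db(pre_fec_ber: float,
                     symbol_rate_baud: float,
                     polarizations: int = 2) -> float:
    """Ideal required OSNR (dB, 0.1 nm) for QPSK at a target pre-FEC BER."""
    # Gray-coded QPSK: BER = Q(sqrt(Es/N0)), so Es/N0 = (Q^-1(BER))^2.
    q_inverse = NormalDist().inv_cdf(1.0 - pre_fec_ber)
    snr_linear = q_inverse ** 2
    # Convert per-polarization SNR to OSNR in the reference bandwidth.
    osnr_linear = (polarizations * symbol_rate_baud
                   / (2.0 * REF_BANDWIDTH_HZ)) * snr_linear
    return 10.0 * math.log10(osnr_linear)


osnr_strict = required_osnr_db(1e-3, 32e9)
osnr_relaxed = required_osnr_db(1e-2, 32e9)
print(f"BER 1e-3: {osnr_strict:.1f} dB, BER 1e-2: {osnr_relaxed:.1f} dB")
```

Under these assumptions the two targets differ by roughly 2.5 dB, so two answers that both look plausible can disagree by more than a typical design margin unless the BER target is stated.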

Configuration queries: Asking how to configure a protection switching mechanism, how to set amplifier gain targets, or how to interpret an alarm code may produce procedurally different sequences across queries, particularly if the underlying topic is one where multiple approaches are valid.

6.2 Testing for Consistency

Engineers evaluating AI tools should run a consistency test as part of their evaluation process. This involves selecting 15 to 20 representative queries relevant to their workflow, posing each query to the tool multiple times with slight variations in phrasing, and comparing the outputs. The evaluation criteria should include: whether the same numerical values appear consistently, whether the same assumptions are stated explicitly, and whether the same caveats and scope limitations appear.
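
The numerical part of such a test can be sketched in a few lines. The example below assumes the tool's responses have already been collected as plain strings; the regular expression, the 0.5 dB tolerance, and the function names are illustrative choices rather than an established method. It checks only the first dB value in each response and does not compare stated assumptions or caveats, which still need manual review.

```python
import re

# Matches values such as "13.9 dB" but not "3 dBm".
DB_VALUE = re.compile(r"(-?\d+(?:\.\d+)?)\s*dB\b")


def first_db_value(response: str):
    """Return the first dB value quoted in a response, or None."""
    match = DB_VALUE.search(response)
    return float(match.group(1)) if match else None


def consistency_report(responses, tolerance_db: float = 0.5) -> dict:
    """Compare the first dB value across repeated or rephrased queries."""
    values = [first_db_value(r) for r in responses]
    answered = [v for v in values if v is not None]
    missing = len(values) - len(answered)
    spread = max(answered) - min(answered) if answered else None
    consistent = bool(answered) and missing == 0 and spread <= tolerance_db
    return {"values": values, "missing": missing,
            "spread_db": spread, "consistent": consistent}


responses = [
    "Assuming a pre-FEC BER of 1e-3, the required OSNR is about 13.9 dB.",
    "You need roughly 14.0 dB of OSNR.",
    "The required OSNR is 11.4 dB.",
]
print(consistency_report(responses))
```

A spread above the tolerance is a prompt to inspect the responses, not a verdict: the difference may come from differing but legitimate assumptions that the tool failed to state.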

| Criterion | What to Test | Acceptable Signal | Risk Signal | Engineering Implication |
|---|---|---|---|---|
| Consistency | Same query, varied phrasing, repeated sessions | Same numerical outputs, same stated assumptions | Different values, no stated assumptions | Low trust for specification-level use |
| Scope Awareness | Queries requiring vendor-specific or recent data | Flags uncertainty, directs to authoritative source | Generates confident answer regardless | Not deployable for design decisions |
| Hallucination Rate | Known-answer queries with verifiable standards | <2% error on verifiable specifications | >5% fabricated or incorrect values | Requires human verification of all outputs |
| Calibrated Confidence | Mix of high-certainty and low-certainty queries | Linguistic confidence correlates with accuracy | Same confidence level for all outputs | Cannot be used without independent checks |
| Assumption Transparency | Calculations and design recommendations | All assumptions stated explicitly | Assumptions embedded but not stated | Results not reproducible or verifiable |
| Domain Boundary Handling | Novel configurations, edge cases | Acknowledges novelty, flags verification need | Generates answer as if standard case | False confidence in novel scenarios |
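
The hallucination-rate row is the only one with numerical thresholds, so it is the easiest to automate. The sketch below applies the table's <2% and >5% signals to a tally of known-answer queries. The function name is hypothetical, and the "indeterminate" label for the band between 2% and 5% is an assumption, since the table does not say how to treat that range.

```python
def classify_hallucination_rate(errors: int, total: int) -> str:
    """Map an observed error count on known-answer queries to a signal."""
    if total <= 0:
        raise ValueError("total must be positive")
    if not 0 <= errors <= total:
        raise ValueError("errors must be between 0 and total")
    rate = errors / total
    if rate < 0.02:
        return "acceptable"      # table: <2% error on verifiable specifications
    if rate > 0.05:
        return "risk"            # table: >5% fabricated or incorrect values
    return "indeterminate"       # assumption: band not defined in the table


print(classify_hallucination_rate(errors=1, total=100))
print(classify_hallucination_rate(errors=6, total=100))
```

With only 15 to 20 queries, a single error already exceeds 5%, so a larger known-answer set is needed before the lower threshold can be tested meaningfully.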

7. Engineering Liability and the AI Tool Decision

In professional engineering, the engineer signing off on a design, a specification, or a technical recommendation carries responsibility for that work. Regulatory frameworks and professional codes of practice in telecommunications engineering are clear: the use of an AI tool as part of a design workflow does not transfer responsibility from the engineer to the tool. The engineer remains accountable for the accuracy and appropriateness of the outputs.

This has a direct consequence for AI tool evaluation: the acceptable reliability threshold is not determined by the tool vendor's benchmark claims — it is determined by the risk profile of the task the tool is being used to support.

7.1 Task Risk Classification for AI Tool Use

A practical approach to managing AI tool liability in optical engineering is to classify tasks by their risk level and set corresponding requirements for AI tool reliability at each level:

| Risk Level | Task Examples | AI Tool Use Guideline | Human Verification Required |
|---|---|---|---|
| Exploratory (Low Risk) | Learning a new technology, initial orientation, concept explanation | AI tool appropriate as primary resource; treat as reference, not specification | No mandatory verification for orientation tasks |
| Analytical (Moderate Risk) | Preliminary link budget estimation, fault hypothesis generation, traffic analysis support | AI tool appropriate for draft outputs; all numerical values require engineer review | Engineer review of all numerical outputs before use |
| Design (Elevated Risk) | Optical budget for live network deployment, amplifier gain plan, protection scheme selection | AI tool for acceleration only; all outputs must be independently verified against standards and datasheets | Mandatory independent verification; sign-off by qualified engineer |
| Operational (High Risk) | Live network configuration, alarm response, maintenance window procedures | AI tool as advisory only; never as sole authority for any configuration change | Mandatory review; change management process applies |
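
A team that wants to enforce this classification in tooling, rather than leave it in a policy document, could encode it as data. The sketch below is one possible encoding: the enum, dataclass, and field names are hypothetical, and the boolean `verification_required` is a simplification of the table's last column.

```python
from dataclasses import dataclass
from enum import Enum


class RiskLevel(Enum):
    EXPLORATORY = "low"
    ANALYTICAL = "moderate"
    DESIGN = "elevated"
    OPERATIONAL = "high"


@dataclass(frozen=True)
class VerificationPolicy:
    ai_role: str                 # how the AI output may be used
    verification: str            # what the engineer must do
    verification_required: bool  # simplified yes/no view of the above


POLICIES = {
    RiskLevel.EXPLORATORY: VerificationPolicy(
        "primary resource, reference only",
        "no mandatory verification", False),
    RiskLevel.ANALYTICAL: VerificationPolicy(
        "draft outputs",
        "engineer review of all numerical outputs", True),
    RiskLevel.DESIGN: VerificationPolicy(
        "acceleration only",
        "independent verification and qualified sign-off", True),
    RiskLevel.OPERATIONAL: VerificationPolicy(
        "advisory only",
        "mandatory review under change management", True),
}


def can_use_unverified(level: RiskLevel) -> bool:
    """True only when the policy imposes no mandatory verification."""
    return not POLICIES[level].verification_required


print(can_use_unverified(RiskLevel.EXPLORATORY))
print(can_use_unverified(RiskLevel.DESIGN))
```

Keeping the policy in one table-like structure means a workflow tool can look up the requirement from the task's risk level instead of relying on each engineer to remember it.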

7.2 The Verification Obligation

Where AI tools are used in tasks with engineering consequence — design calculations, operational procedures, or specifications that feed into procurement or deployment — the engineer's obligation is to verify the AI output against the appropriate authoritative source before acting on it. This means:

For standards-referenced content: checking the cited ITU-T, IEEE, OIF, or MEF document directly and confirming that the recommendation number, content, and applicability match what the AI has stated.

For equipment specifications: cross-referencing vendor datasheets, product documentation, or application notes rather than accepting AI-generated performance figures.

For calculated values: independently running the calculation — whether by hand, in a spreadsheet, or using a verified simulation tool — rather than accepting a single AI-generated result as authoritative.

Liability Reminder

No AI tool, regardless of its benchmark performance, eliminates the engineer's professional responsibility for the accuracy of specifications, designs, and recommendations that carry their name. "The AI told me" is not an acceptable defense in a root cause analysis following a service impact event. The engineer reviewing the AI output carries the accountability.

8. AI Applications in Optical Networks: Reliability Profiles

Optical network engineering has several well-established AI and ML application areas, each with its own reliability profile. Understanding these profiles helps engineers make informed decisions about where AI tools can be deployed with confidence and where additional verification is mandatory.

8.1 ML-Based Optical Performance Monitoring

Machine learning models for optical performance monitoring (OPM) are among the most mature AI applications in the optical domain. These models are trained on telemetry data from specific network elements and learn to detect patterns indicating impairments, degradation trends, or imminent failures. When deployed on data from the same network type and equipment generation as the training data, they demonstrate high consistency and measurable reliability because their performance can be continuously evaluated against observed outcomes.

Research in this area has demonstrated that ML-based fault prediction approaches can achieve high detection accuracy when applied to the equipment types and operating conditions represented in the training data. Studies have shown that models monitoring parameters such as optical power levels, laser bias current, and EDFA gain can predict board-level failures with accuracy rates above 90%, depending on the fault type and lead time.

The key reliability consideration for OPM applications is domain specificity: a model trained on one network's telemetry data should not be assumed to generalize to a different network's equipment without retraining or at minimum extensive validation. The reliability is tied to the deployment context matching the training context.

8.2 Quality of Transmission Estimation

Quality of Transmission (QoT) estimation — predicting whether a proposed optical path will achieve required performance before it is provisioned — is an active area for ML application. Traditional QoT tools use analytical models based on known impairments: chromatic dispersion, nonlinear effects, amplifier noise, and so on. ML approaches learn to predict QoT from training data that includes both the network parameters and the observed performance outcomes.

The reliability profile for ML-based QoT estimation is mixed. For paths that are similar to those in the training data — same fiber type, similar span lengths, same amplifier architecture — the models can achieve accuracy competitive with analytical tools and in some cases exceed them by capturing nonlinear interactions that are difficult to model analytically. For paths with characteristics outside the training distribution — unusual span configurations, different fiber categories, or higher-generation modulation formats not present in training data — the models' reliability degrades in ways that may not be visible in their output confidence signals.
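
Because the model's own confidence may not reveal this degradation, one defensive measure is to check a proposed path against a record of what the training data covered before trusting the prediction. The sketch below assumes such a record exists and is limited to three features; `TrainingCoverage`, the dictionary keys, and the example values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainingCoverage:
    """Hypothetical summary of what a QoT model saw during training."""
    fiber_types: frozenset
    modulation_formats: frozenset
    span_km_range: tuple  # (minimum, maximum) span length in km


def coverage_gaps(path: dict, coverage: TrainingCoverage) -> list:
    """List the ways a proposed path falls outside the training coverage."""
    gaps = []
    if path["fiber_type"] not in coverage.fiber_types:
        gaps.append(f"fiber type {path['fiber_type']} not in training data")
    if path["modulation"] not in coverage.modulation_formats:
        gaps.append(f"modulation {path['modulation']} not in training data")
    low, high = coverage.span_km_range
    outside = [s for s in path["span_lengths_km"] if not low <= s <= high]
    if outside:
        gaps.append(f"span lengths outside {low}-{high} km: {outside}")
    return gaps


coverage = TrainingCoverage(
    fiber_types=frozenset({"G.652"}),
    modulation_formats=frozenset({"PM-QPSK"}),
    span_km_range=(40.0, 100.0),
)
candidate = {"fiber_type": "G.655", "modulation": "PM-16QAM",
             "span_lengths_km": [80.0, 130.0]}
for gap in coverage_gaps(candidate, coverage):
    print(gap)
```

An empty list does not prove the prediction is reliable; it only means none of the checked features is known to be outside the training distribution.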

8.3 AI-Assisted Fault Management

Fault management in optical networks involves detecting, classifying, localizing, and identifying the root cause of failures. AI tools are applied across this pipeline, from real-time alarm correlation to predictive maintenance of aging components. The reliability profile across this pipeline varies by task.

Alarm correlation: AI-based alarm correlation, which groups related alarms from a propagated fault condition and identifies the root event, has a reasonably well-defined reliability profile. The space of alarm correlation patterns is finite, training data from operational networks is available, and the tool's output can be evaluated against operator resolution records. Alarm correlation tools from established network management platforms have demonstrated consistent performance in reducing alarm noise and accelerating root cause identification.

Soft failure classification: Detecting and classifying soft failures — degradations that occur gradually rather than as hard outages — is more challenging from a reliability standpoint. Soft failures are by nature subtle, their signatures can overlap with normal performance variation, and the training data for soft failure categories may be sparse because these events are less frequent than hard failures. Research results in the published literature show strong accuracy on controlled test sets, but operational deployment requires careful validation against the specific network environment.

Predictive maintenance: ML-based predictive maintenance, which predicts component failure before it occurs based on parameter trends, is among the highest-value AI applications in optical networks and has demonstrated measurable operational benefits in tier-1 operator deployments. The reliability in production depends directly on the quality of the historical data used for training, the consistency of the telemetry collection infrastructure, and the degree to which operating conditions have remained stable since model training.

Figure 3: AI Application Reliability Maturity in Optical Network Engineering (As of 2025)

Figure 3: Relative deployment maturity and reliability of AI/ML applications in optical network engineering. Ratings reflect the degree of field validation and consistency of results in operational deployments, based on research literature and industry deployment reports as of 2025.

8.4 LLM-Based Engineering Assistants

Large language model-based assistants represent the newest category of AI tool entering optical engineering workflows. Unlike the ML applications above — which are trained on structured telemetry data for specific tasks — LLMs are trained on broad corpora of text and then adapted for technical domains. This gives them broad knowledge coverage but also makes their reliability profile fundamentally different.

LLMs excel at explanation, orientation, and synthesis — tasks where a good approximation to the correct answer drawn from general knowledge is useful and where errors can be caught by a knowledgeable engineer reviewing the output. They are less suited to tasks requiring precision-level numerical accuracy, tasks where the correct answer depends on equipment-specific or vendor-specific details not well-represented in their training data, and tasks where the consequences of a subtle error propagate through a downstream engineering process.

The reliability of LLM-based tools for optical engineering is also particularly sensitive to the staleness of training data. Optical technology evolves rapidly. Modulation formats, coherent DSP capabilities, line system amplifier performance, and network management standards are all areas where what was current two years ago may be materially different from what is deployed today. An LLM with a training cutoff that predates recent standards updates or technology generations may confidently describe a state of the art that is one or more technology cycles behind the current deployment environment.

9. A Practical Evaluation Framework for AI Tools in Optical Engineering

Given the considerations above, how should an optical engineering team evaluate an AI tool before deploying it in a workflow? The following framework provides a structured approach that moves beyond benchmark scores and into the operational reliability dimensions that matter in production.

9.1 Define the Use Case Precisely

Before evaluating an AI tool, the engineering team should precisely define the use case they are evaluating for. The reliability requirements for "help engineers understand optical impairment concepts" are fundamentally different from "assist in generating optical link budget calculations for new deployments." Defining the use case precisely allows the evaluation to focus on the reliability dimensions most relevant to that specific application.

9.2 Construct a Domain-Relevant Test Set

The evaluation should use a test set constructed from real engineering scenarios relevant to the organization's actual work, not from the AI vendor's published benchmark. This test set should include:

- Questions with well-known, verifiable answers, to test baseline accuracy and hallucination rate.
- Questions that require the tool to acknowledge uncertainty, to test scope awareness.
- Questions posed in multiple phrasings, to test consistency.
- Questions at the edge of the tool's expected knowledge, to test behavior when operating outside reliable coverage.
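
A test set built this way is easier to maintain if each item carries its category, so that gaps in coverage are visible before the evaluation starts. The following is a minimal sketch; the category labels, the `EvalItem` type, and the sample prompts are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass

# One label per question type described above (names are illustrative).
CATEGORIES = ("known_answer", "requires_uncertainty",
              "rephrased", "edge_of_coverage")


@dataclass(frozen=True)
class EvalItem:
    prompt: str
    category: str


def missing_categories(items) -> list:
    """Return the categories that have no test items yet."""
    counts = Counter(item.category for item in items)
    return [c for c in CATEGORIES if counts[c] == 0]


test_set = [
    EvalItem("What channel spacings does ITU-T G.694.1 define?",
             "known_answer"),
    EvalItem("What is the gain tilt of our installed amplifier model?",
             "requires_uncertainty"),
    EvalItem("Which grid spacings are specified in G.694.1?",
             "rephrased"),
]
print(missing_categories(test_set))
```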

9.3 Evaluate Assumption Transparency

For any AI tool being evaluated for use in calculations or design support, a key evaluation criterion is whether the tool explicitly states the assumptions underlying its outputs. An output of "the required OSNR is 15 dB" is far less useful than "assuming PM-QPSK modulation at 32 Gbaud, a pre-FEC BER target of 10⁻³, and a 7% FEC overhead, the required OSNR is approximately 15 dB; verify against your specific FEC implementation's sensitivity curve." The second output is both more transparent and more useful, because it allows the engineer to determine whether the assumptions match their context.
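As a rough illustration, an evaluator could record each tool output in a structured form and check which expected assumptions were left unstated. The sketch below assumes the evaluator transcribes the tool's answer into a hypothetical `ToolOutput` record; the field names and the list of required assumptions are illustrative choices taken from the OSNR example above, not a standard schema.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ToolOutput:
    """A tool answer as transcribed by the evaluator (hypothetical schema)."""
    value: float
    unit: str
    assumptions: Dict[str, str] = field(default_factory=dict)


def missing_assumptions(output: ToolOutput, required: List[str]) -> List[str]:
    """Return the required assumption names that the output did not state."""
    return [name for name in required if name not in output.assumptions]


# Assumptions an engineer would need before trusting a required-OSNR figure.
REQUIRED = ["modulation", "symbol_rate", "pre_fec_ber", "fec_overhead"]

bare = ToolOutput(value=15.0, unit="dB")
transparent = ToolOutput(
    value=15.0,
    unit="dB",
    assumptions={
        "modulation": "PM-QPSK",
        "symbol_rate": "32 Gbaud",
        "pre_fec_ber": "1e-3",
        "fec_overhead": "7%",
    },
)

bare_gaps = missing_assumptions(bare, REQUIRED)
transparent_gaps = missing_assumptions(transparent, REQUIRED)
```

Here `bare_gaps` lists all four assumptions while `transparent_gaps` is empty, which gives the evaluation a simple, countable measure of transparency per answer.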

9.4 Run the Scope Boundary Test

Deliberately pose questions that are outside the tool's reliable knowledge domain and observe how it responds. Examples for optical engineering include: asking for the specific gain tilt specification of an amplifier type the tool is unlikely to have detailed data on; asking about the status of a standards activity that is recent or ongoing; or asking about a proprietary configuration that is organization-specific. A reliable tool will acknowledge the limits of its knowledge. A tool that responds with equal confidence to all queries, regardless of their verifiability, should be considered unsuitable for unverified use in production workflows.
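A scope boundary test can be scored in a simple way, as sketched below. This is a minimal sketch under a strong assumption: that acknowledgment of limits can be detected by matching a short list of phrases. The `HEDGE_MARKERS` list and the function names are hypothetical, and in practice an engineer reading the responses will be a more trustworthy judge than keyword matching.

```python
from typing import List

# Illustrative phrases only; a real list would be tuned to the tool under test.
HEDGE_MARKERS = (
    "i don't know",
    "i do not know",
    "not certain",
    "cannot verify",
    "no reliable data",
    "consult the datasheet",
)


def acknowledges_limits(response: str) -> bool:
    """True if the response contains at least one hedge marker."""
    text = response.lower()
    return any(marker in text for marker in HEDGE_MARKERS)


def scope_awareness_rate(responses: List[str]) -> float:
    """Fraction of out-of-scope questions where the tool admitted its limits."""
    if not responses:
        raise ValueError("need at least one response")
    flagged = sum(1 for r in responses if acknowledges_limits(r))
    return flagged / len(responses)


# Responses to two deliberately out-of-scope questions (invented examples).
responses = [
    "The gain tilt specification is 0.5 dB across the band.",
    "I cannot verify this value; please consult the datasheet.",
]
rate = scope_awareness_rate(responses)
```

A rate near zero on questions the tool cannot plausibly answer is the warning sign described above: equal confidence regardless of verifiability.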

9.5 Integrate Verification Into the Workflow

For any AI tool that meets the evaluation threshold for a specific use case, the final step is to integrate explicit verification checkpoints into the workflow that uses the tool. These checkpoints should be proportional to the risk level of the task. For exploratory tasks, a general sanity check by the engineer is appropriate. For design tasks, a structured verification step — checking AI outputs against the relevant standard, datasheet, or calculation tool — should be a mandatory part of the workflow documentation.
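The idea of checkpoints proportional to risk can be written down as a small gate, sketched below. The risk levels follow the two task types named above; the check names and the `may_release` function are hypothetical and would be replaced by whatever the organization's workflow documentation defines.

```python
from enum import Enum
from typing import Iterable


class Risk(Enum):
    EXPLORATORY = "exploratory"
    DESIGN = "design"


# Assumed mapping from task risk to the checks that must be completed.
REQUIRED_CHECKS = {
    Risk.EXPLORATORY: {"engineer_sanity_check"},
    Risk.DESIGN: {"engineer_sanity_check", "checked_against_reference"},
}


def may_release(risk: Risk, completed_checks: Iterable[str]) -> bool:
    """True only if every check required for this risk level was completed."""
    return REQUIRED_CHECKS[risk] <= set(completed_checks)


# A sanity check alone is enough for exploration but not for design work.
ok_for_exploration = may_release(Risk.EXPLORATORY, ["engineer_sanity_check"])
ok_for_design = may_release(Risk.DESIGN, ["engineer_sanity_check"])
```

Encoding the gate explicitly, rather than leaving it to habit, makes the verification step auditable: an AI-assisted design output cannot move forward until the reference check is recorded.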

Section Summary

- Define the specific use case before evaluating an AI tool — generic benchmark scores do not predict task-specific reliability.

- Use a domain-relevant test set that includes questions requiring uncertainty acknowledgment, not just questions with known correct answers.

- Evaluate assumption transparency: outputs that state their assumptions are more useful and more verifiable than outputs that do not.

- Run a scope boundary test to evaluate how the tool behaves when asked questions outside its reliable knowledge domain.

- Build verification checkpoints into any workflow that uses AI outputs for engineering tasks with operational consequence.

10. AI Tool Integration Architecture for Optical Network Operations

When an AI tool has been evaluated and meets the reliability threshold for a defined use case, the next consideration is how to integrate it into the operational workflow in a way that preserves the engineer's ability to verify outputs and maintain accountability. The following diagram illustrates a recommended integration architecture that positions AI tools as assistants within a human-in-the-loop workflow rather than as autonomous decision-makers.

Figure 4: Recommended AI tool integration architecture for optical network engineering. The verify layer is non-optional for design and operational tasks.

11. Future Directions

The reliability gap between capability and deployability is an area of active work in the AI research and engineering communities. Several developments are likely to improve the reliability profile of AI tools for optical engineering over the coming years.

11.1 Retrieval-Augmented Generation

Retrieval-augmented generation (RAG) architectures address one of the primary sources of unreliability in LLM-based tools: outdated or incomplete training data. A RAG system augments the language model's generation process by retrieving relevant, verified information from a curated knowledge base — which can include up-to-date standards documents, vendor datasheets, and network-specific documentation — before generating a response. This allows the tool to ground its outputs in current, authoritative sources rather than relying solely on learned representations from training data.

For optical engineering applications, a well-implemented RAG architecture can substantially reduce specification hallucination and standard reference hallucination, because the tool retrieves the actual document text rather than generating from memory. The reliability of this approach depends on the quality of the underlying knowledge base — which must be accurately curated, regularly updated, and correctly indexed to return the most relevant material for a given query.
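The retrieve-then-generate pattern can be sketched in a few lines. The example below is a toy under stated assumptions: retrieval is plain keyword overlap rather than the embedding search a production system would likely use, the knowledge base entries are placeholder text rather than real specification content, and the function names are hypothetical. The call to the language model itself is left out.

```python
import re
from typing import Dict, List, Set, Tuple


def tokenize(text: str) -> Set[str]:
    """Lowercase alphanumeric tokens; a stand-in for real text indexing."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, knowledge_base: Dict[str, str],
             top_k: int = 2) -> List[Tuple[str, str]]:
    """Return up to top_k (doc_id, text) pairs ranked by shared tokens."""
    query_tokens = tokenize(query)
    scored = [(len(query_tokens & tokenize(body)), doc_id)
              for doc_id, body in knowledge_base.items()]
    scored = [(score, doc_id) for score, doc_id in scored if score > 0]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [(doc_id, knowledge_base[doc_id]) for _, doc_id in scored[:top_k]]


def build_grounded_prompt(query: str, passages: List[Tuple[str, str]]) -> str:
    """Build a prompt that restricts the model to the retrieved sources."""
    if not passages:
        return ("No source was found. State that the answer cannot be "
                f"verified.\nQuestion: {query}")
    sources = "\n".join(f"[{doc_id}] {body}" for doc_id, body in passages)
    return ("Answer using only these sources and cite their IDs.\n"
            f"{sources}\nQuestion: {query}")


# Placeholder documents; a real knowledge base holds curated source text.
kb = {
    "amp-note": "placeholder amplifier gain tilt guidance",
    "grid-note": "placeholder channel spacing guidance",
}
question = "What is the amplifier gain tilt?"
prompt = build_grounded_prompt(question, retrieve(question, kb, top_k=1))
```

The empty-retrieval branch matters as much as the happy path: when nothing relevant is found, the prompt instructs the model to say so rather than fall back on memory.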

11.2 Domain-Specific Fine-Tuning and Validation

Fine-tuning AI models on optical networking domain data — standards documents, technical literature, operational data, and expert-reviewed Q&A pairs — can improve both capability and consistency within the domain. The key consideration for reliability is that fine-tuning must be accompanied by a rigorous evaluation process that tests not just benchmark performance but the scope awareness and calibrated uncertainty properties described earlier in this article.

11.3 Formal Uncertainty Quantification

Research in uncertainty quantification (UQ) for neural networks aims to give models the ability to produce calibrated confidence estimates alongside their outputs: not just a fluent answer, but a meaningful estimate of how likely that answer is to be correct. Progress in this area could allow AI tools in optical engineering to distinguish clearly between high-confidence outputs grounded in well-represented training data and lower-confidence outputs at the edges of their knowledge, so that verification effort can be concentrated where it is most needed.
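One common way to measure calibration is the expected calibration error (ECE), which compares stated confidence with observed accuracy within confidence bins. The sketch below assumes the tool exposes a numeric confidence between 0 and 1 for each answer, which many current tools do not; the function name and bin count are illustrative choices.

```python
from typing import List


def expected_calibration_error(confidences: List[float],
                               correct: List[bool],
                               n_bins: int = 10) -> float:
    """Weighted average gap between mean confidence and accuracy per bin."""
    if not confidences or len(confidences) != len(correct):
        raise ValueError("inputs must be non-empty and of equal length")

    # Group each (confidence, correctness) pair into an equal-width bin.
    bins: List[List[tuple]] = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        index = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in last bin
        bins[index].append((conf, ok))

    total = len(confidences)
    ece = 0.0
    for items in bins:
        if not items:
            continue
        avg_conf = sum(conf for conf, _ in items) / len(items)
        accuracy = sum(1 for _, ok in items if ok) / len(items)
        ece += (len(items) / total) * abs(avg_conf - accuracy)
    return ece


# Four answers given at 90% confidence, of which three were correct.
ece = expected_calibration_error([0.9, 0.9, 0.9, 0.9],
                                 [True, True, True, False])
```

In this example the tool claims 90% confidence but is right 75% of the time, giving an ECE of about 0.15. A well-calibrated tool would score close to zero on a sufficiently large test set.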

11.4 Intent-Based Networking and AI Integration Standards

Standards bodies including the IETF, ITU-T, and MEF are actively developing frameworks for intent-based networking and AI-assisted network management. As these frameworks mature, they are expected to define requirements for the reliability, auditability, and human oversight of AI components in network operations systems. These standards developments will provide a more structured foundation for evaluating AI tools against defined requirements rather than relying on ad-hoc evaluation approaches.

12. Conclusion

The integration of AI tools into optical network engineering is not a question of if but how. The technology is advancing rapidly, and the potential benefits — in planning speed, operational efficiency, fault analysis depth, and predictive maintenance accuracy — are genuine. Engineers who develop the ability to evaluate and use these tools effectively will be better positioned than those who either avoid them entirely or adopt them without adequate scrutiny.

The central message of this article is that benchmark performance is a starting point for evaluation, not an endpoint. The distinction between what an AI model can do and what it reliably does across the full range of production engineering scenarios is the dimension that determines deployability. Consistency, scope awareness, hallucination rate, calibrated uncertainty, and assumption transparency are the properties that matter most for engineering reliability — and they are properties that can be tested systematically before committing a tool to a workflow.

Engineers evaluating AI tools should approach the process the same way they approach any other engineering system evaluation: define the requirements, build a relevant test protocol, run the evaluation against the actual use case rather than the vendor's benchmark, and document the results as part of the tool adoption record. Where tools meet reliability thresholds for specific use cases, integrate them with appropriate verification steps and clear accountability structures. Where they do not, use them for what they are reliably good at — exploration, orientation, and accelerating the preparatory work that precedes engineering decisions — while keeping the precision-level work in the hands of verified tools and qualified engineers.

The optical domain is too consequential and too specialized to accept AI capability on faith. The engineers who will deploy these tools most effectively are those who understand exactly where reliability ends and where engineering judgment must take over.

Article Summary

- Capability measures peak AI performance under controlled conditions; reliability measures consistent performance across all production scenarios. These are not the same metric.

- Four properties define AI reliability in engineering: consistency, scope awareness, hallucination rate, and calibrated uncertainty. All four must be evaluated, not just task accuracy.

- Hallucination in optical engineering is particularly hazardous when AI tools are used for specifications, standard references, or procedural guidance that feeds into deployment decisions.

- The verification obligation stays with the engineer. AI tool use does not reduce professional accountability for technical outputs.

- Reliability requirements scale with task risk: exploratory tasks have lower requirements than design or operational tasks, which require verified outputs and engineer sign-off.

Developed by MapYourTech Team

For educational purposes in Optical Networking Communications Technologies

Note: This guide is based on industry standards, best practices, and real-world implementation experiences. Specific implementations may vary based on equipment vendors, network topology, and regulatory requirements. Always consult with qualified network engineers and follow vendor documentation for actual deployments.

Optical Communications & Network Automation Expert | Author of 3 Books for Optical Engineers | Founder, MapYourTech

Optical networking engineer with nearly two decades of experience across DWDM, OTN, coherent optics, submarine systems, and cloud infrastructure. Founder of MapYourTech.